1. Purpose

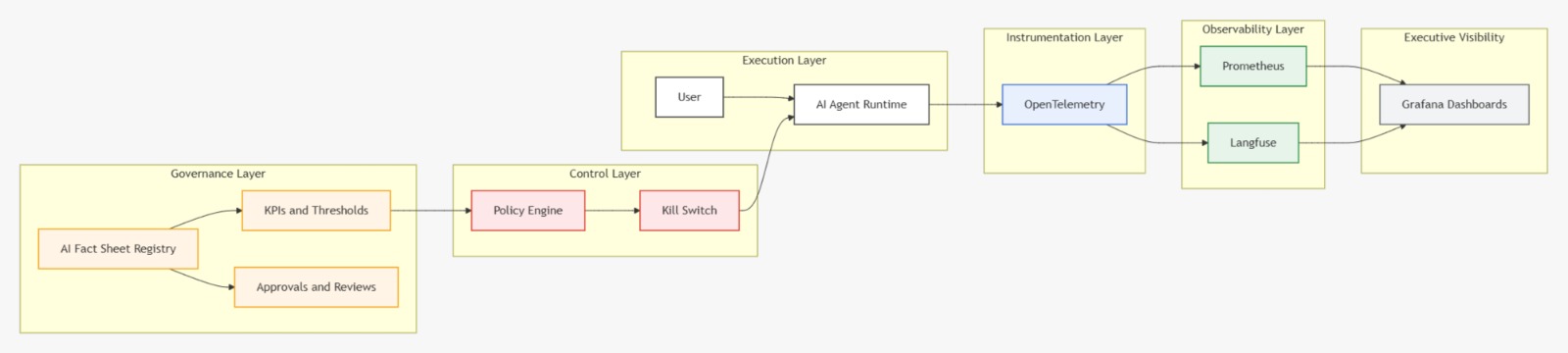

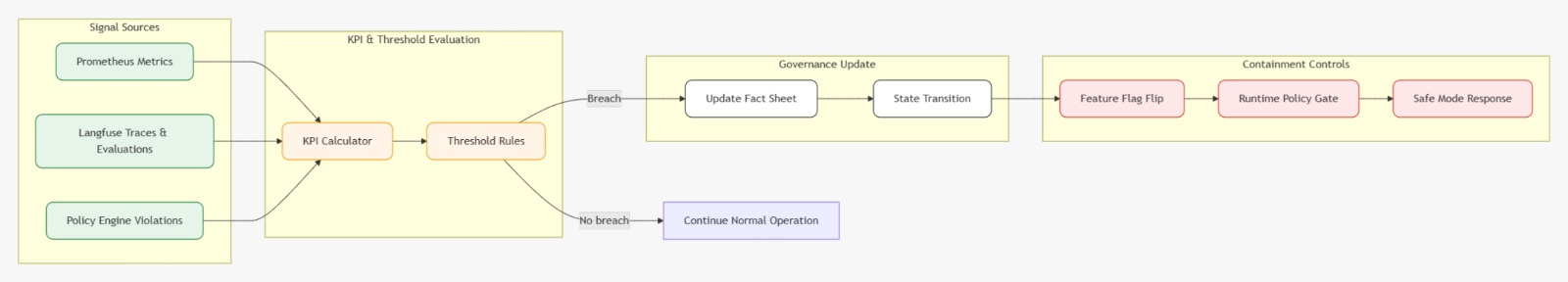

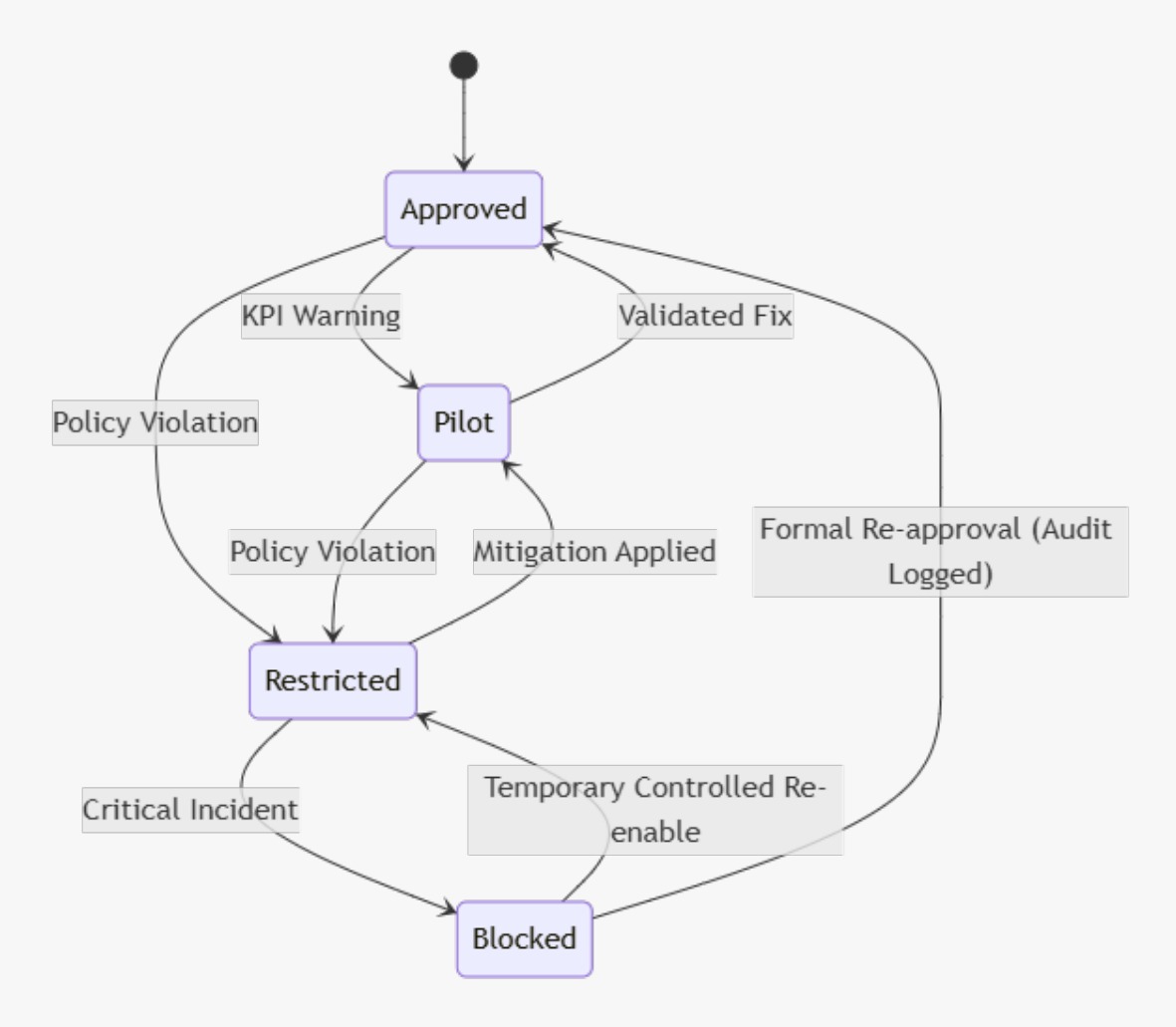

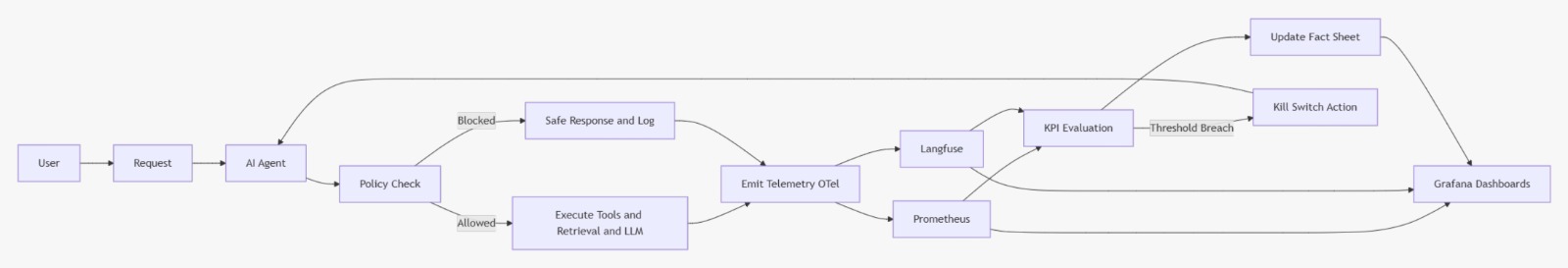

This document defines the architecture, tools, flows, and responsibilities required to implement a mature observability and governance stage for an AI agent. It connects:

- Technical telemetry (health, latency, error rate)

- LLM/agent quality signals (traces, prompts, token/cost)

- Governance artifacts (AI Fact Sheets)

- Risk controls (Policy Engine)

- Rapid containment (Kill Switch)

Goal: transform an experimental agent into an enterprise asset that is auditable, controllable, secure, and operationally reliable.
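As a rough illustration of how these pieces might fit together, the minimal sketch below wraps a single agent call with a kill-switch check, a policy check, and an audit record carrying status, token count, and latency. All names here (`KillSwitch`, `TokenBudgetPolicy`, `GuardedAgent`, `call_model`) are hypothetical and are not taken from this document or from any specific product. The whitespace-based token count is a placeholder assumption, not a real tokenizer.

```python
import time
from dataclasses import dataclass, field


@dataclass
class KillSwitch:
    """Hypothetical containment flag; in practice this would live in shared config."""
    engaged: bool = False


@dataclass
class TokenBudgetPolicy:
    """Hypothetical policy rule: cap the total tokens an agent may consume."""
    max_tokens: int
    used_tokens: int = 0

    def allows(self, requested_tokens: int) -> bool:
        return self.used_tokens + requested_tokens <= self.max_tokens


@dataclass
class GuardedAgent:
    kill_switch: KillSwitch
    policy: TokenBudgetPolicy
    audit_log: list = field(default_factory=list)

    def run(self, prompt: str, call_model) -> dict:
        # Assumption: whitespace word count stands in for a real token count.
        tokens = len(prompt.split())

        # Rapid containment: refuse all work while the kill switch is engaged.
        if self.kill_switch.engaged:
            return self._record("blocked_kill_switch", 0, 0.0)

        # Risk control: refuse calls that would exceed the token budget.
        if not self.policy.allows(tokens):
            return self._record("blocked_policy", 0, 0.0)

        # Technical telemetry: measure latency and capture errors explicitly.
        start = time.perf_counter()
        try:
            call_model(prompt)
            status = "ok"
        except Exception:
            status = "error"
        latency_s = time.perf_counter() - start

        self.policy.used_tokens += tokens
        return self._record(status, tokens, latency_s)

    def _record(self, status: str, tokens: int, latency_s: float) -> dict:
        # Audit trail: every decision is appended, including blocked calls.
        event = {"status": status, "tokens": tokens, "latency_s": latency_s}
        self.audit_log.append(event)
        return event
```

In this sketch every outcome, including blocked and failed calls, lands in `audit_log`, so a failure is never silent. A production design would presumably export these events to a telemetry backend instead of keeping them in memory.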

3. Problem Statement

Without an integrated stack, AI agents typically:

- Fail silently (no early detection)

- Hallucinate without accountability

- Create unpredictable token/cost burn

- Lack auditability and change history

- Cannot be safely contained during incidents

Typical outcome: reputational, financial, compliance, and security risk.