Executive Summary — From IT Governance to AI Governance (and Why AGSC Must Have “Power to Pause”)

Before AI, mature organizations learned a critical lesson: technology without governance scales risk faster than it scales value. AI governance is not a new concept—it is governance applied to a more volatile class of technology that introduces probabilistic behavior, emergent outcomes, drift, and delegated decision-making.

1) Governance in the IT World: The Foundation

In mature IT organizations, governance exists to ensure that technology supports business objectives, operates within risk appetite, meets regulatory and security requirements, and has clear accountability for decisions and outcomes. This is why enterprises established bodies such as IT Steering Committees, Architecture Review Boards (ARBs), Risk & Compliance Committees, and Change Advisory Boards (CABs). These bodies do not build systems; they decide what gets approved, under what conditions, with which controls, and when something must stop.

2) Core Governance Questions (IT → AI)

Every governance model—explicitly or implicitly—answers four questions:

- Who decides? (authority)

- What is being decided? (scope)

- Based on what criteria? (standards, risk, value)

- What happens if controls are missing or violated? (enforcement)

3) Why AI Requires a Dedicated Governance Body

AI systems, especially agentic, semi-autonomous, and autonomous systems, introduce properties that traditional IT governance was not designed to handle:

- Probabilistic behavior (not deterministic outputs)

- Emergent outcomes (not fully predictable interactions)

- Continuous drift (performance and behavior change over time)

- Decision delegation (actions move from humans to machines)

- Blended risk domains (technical + ethical + legal + reputational)

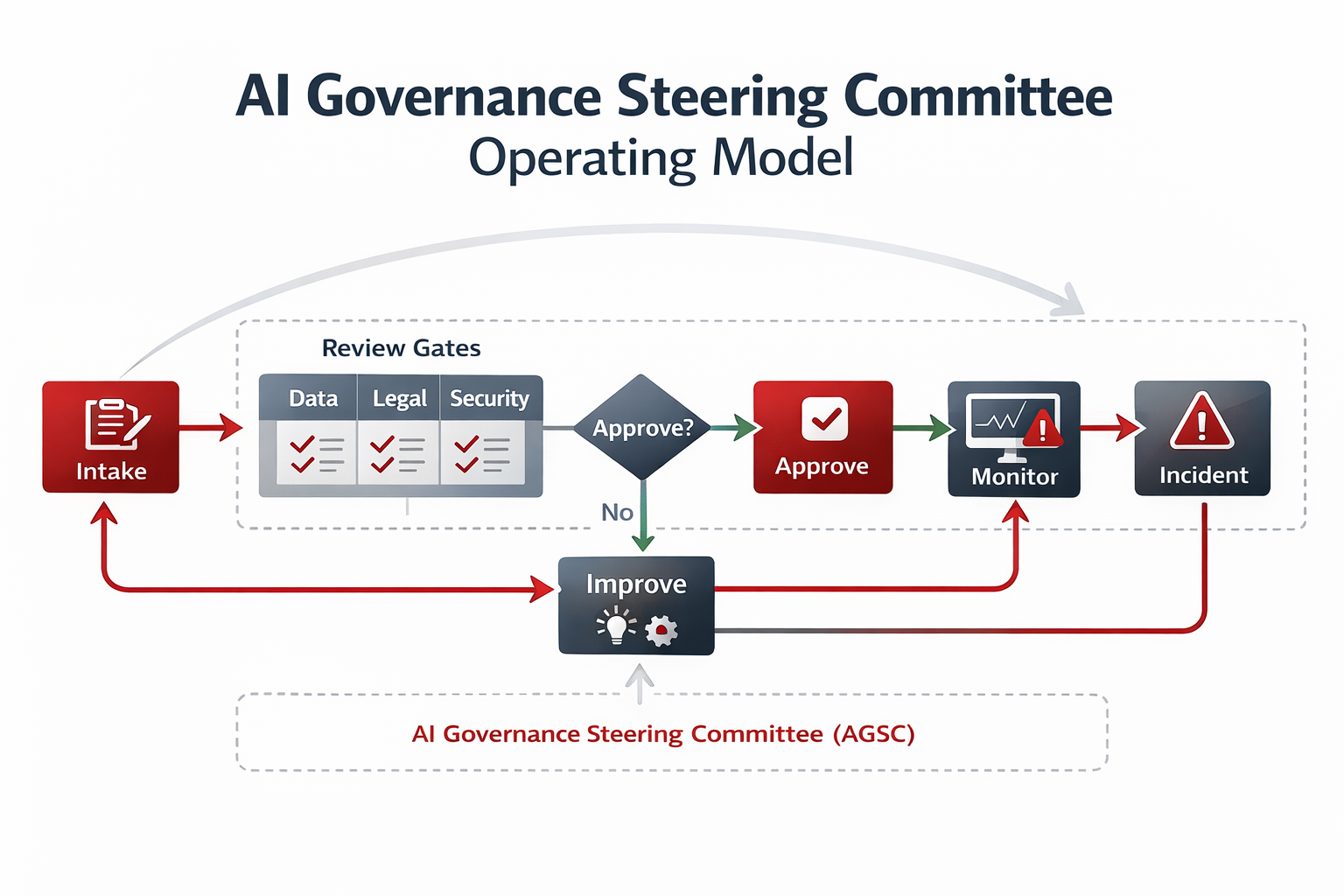

4) AI Governance Steering Committee (AGSC) — Expanded Statement

Establish an AI Governance Steering Committee (AGSC) with explicit decision authority over AI use cases, model and agent approvals, autonomy levels, risk acceptance, and production readiness. The AGSC must operate at the same organizational level as enterprise risk and compliance committees, ensuring AI decisions are governed with the same rigor as financial, cybersecurity, and regulatory risks.

The committee must be empowered to delay, restrict, or pause AI deployments when governance, security, data, or oversight controls are incomplete or ineffective—regardless of delivery pressure or business urgency.
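The pause rule above can be sketched as a simple deployment gate. This is a minimal illustration, not a prescribed implementation: the names `ControlStatus`, `Decision`, and `deployment_decision` are hypothetical, and the four control areas are taken directly from the statement above.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    PAUSE = "pause"


# Control areas named in the AGSC statement (identifiers are illustrative).
REQUIRED_CONTROL_AREAS = ("governance", "security", "data", "oversight")


@dataclass
class ControlStatus:
    area: str
    complete: bool
    effective: bool


def deployment_decision(statuses: list[ControlStatus], business_urgent: bool = False) -> Decision:
    """Return PAUSE if any required control area is missing, incomplete, or ineffective.

    business_urgent is accepted but deliberately ignored: urgency never
    overrides a control gap.
    """
    by_area = {s.area: s for s in statuses}
    for area in REQUIRED_CONTROL_AREAS:
        status = by_area.get(area)
        if status is None or not (status.complete and status.effective):
            return Decision.PAUSE
    return Decision.PROCEED
```

The design point is that `business_urgent` appears in the signature but has no effect on the result, which mirrors the "regardless of delivery pressure or business urgency" clause.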

5) What the AGSC Is (and Is Not)

| What the AGSC is | What the AGSC is not |
|---|---|
| | |

Governance fails when it is advisory. Governance works when it has authority.

6) Core Responsibilities of the AGSC (Lifecycle Decisions)

| Responsibility | What the AGSC decides | Evidence expected |

|---|---|---|

| Use Case Approval | Alignment to strategy, autonomy appropriateness, affected stakeholders understood | Intake packet, stakeholder map, tier assignment, intended-use statement |

| Model / Agent Approval | Evaluation completed, data sources approved/classified, guardrails defined | Evaluation results, data approvals, tool allowlist, constraints |

| Risk Acceptance | Residual risk within appetite, explicit acceptance owner identified | Risk register entry, mitigation status, sign-off record |

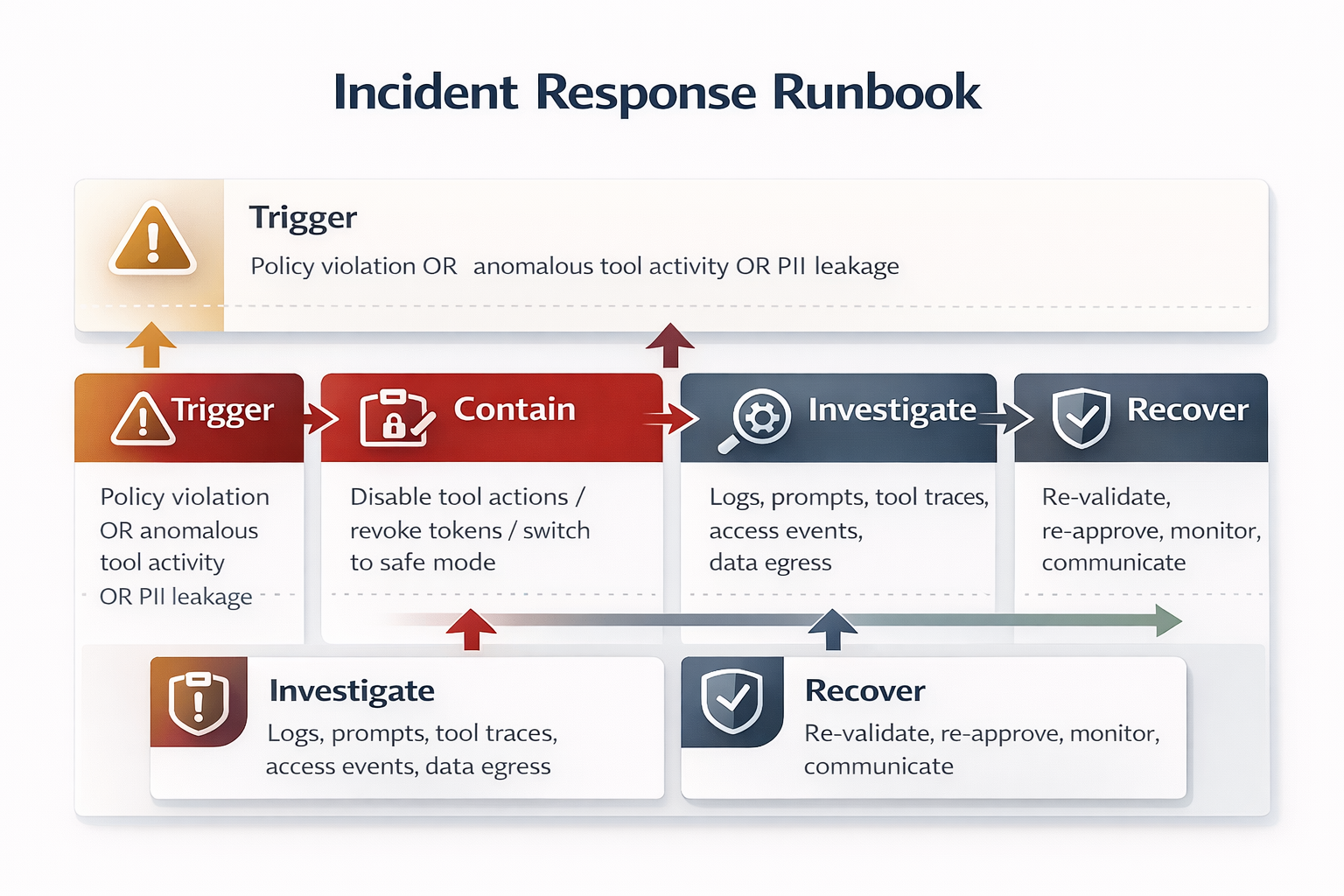

| Production Readiness | Monitoring/logging active, kill switch exists, incident response prepared | SOC runbook, telemetry proof, rollback plan, kill switch test |

| Lifecycle Oversight | Re-approval cadence, drift/performance reviews, incident-driven reassessment | Review calendar, drift dashboards, post-incident actions |
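One way to make the "Evidence expected" column enforceable is to encode it as a checklist that a workflow tool can evaluate. The sketch below assumes hypothetical gate and artifact identifiers derived from the table; the function name `missing_evidence` is illustrative only.

```python
# Evidence expected at each lifecycle decision, keyed by a hypothetical gate id.
REQUIRED_EVIDENCE = {
    "use_case_approval": {
        "intake_packet", "stakeholder_map", "tier_assignment", "intended_use_statement",
    },
    "model_agent_approval": {
        "evaluation_results", "data_approvals", "tool_allowlist", "constraints",
    },
    "risk_acceptance": {
        "risk_register_entry", "mitigation_status", "sign_off_record",
    },
    "production_readiness": {
        "soc_runbook", "telemetry_proof", "rollback_plan", "kill_switch_test",
    },
    "lifecycle_oversight": {
        "review_calendar", "drift_dashboards", "post_incident_actions",
    },
}


def missing_evidence(gate: str, submitted: set[str]) -> set[str]:
    """Return the evidence items still outstanding for the given gate."""
    return REQUIRED_EVIDENCE[gate] - submitted


def gate_ready(gate: str, submitted: set[str]) -> bool:
    """A gate is ready for an AGSC decision only when nothing is missing."""
    return not missing_evidence(gate, submitted)
```

For example, a risk acceptance request that arrives with only a risk register entry would report the mitigation status and sign-off record as outstanding.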

7) Core Principles of an AI Governance Framework

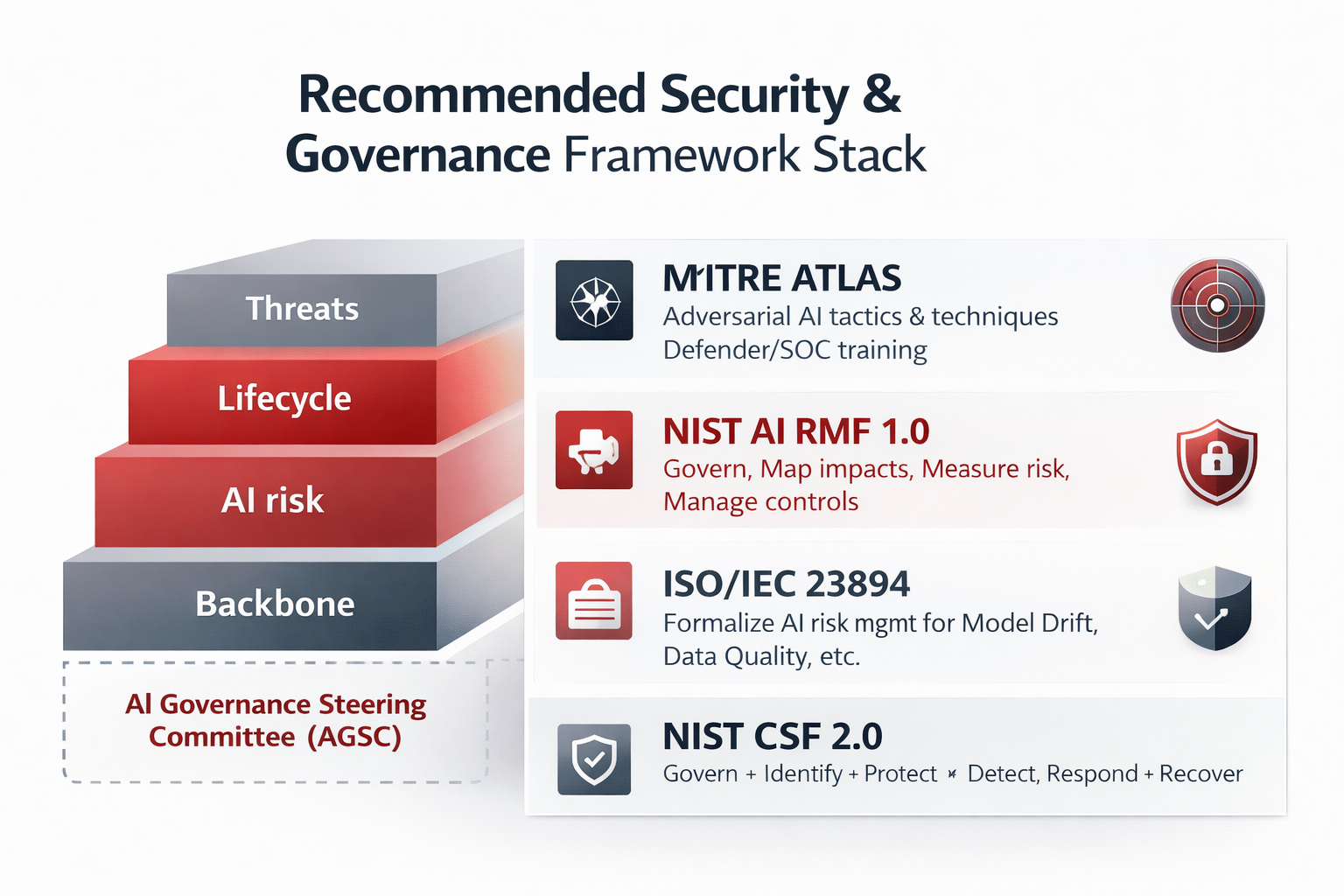

An effective AI Governance Framework rests on six core principles consistent with NIST AI RMF, enterprise risk management, and real-world practice:

| Principle | Meaning in practice |
|---|---|
| 1. Clear Accountability | Every AI system has a named business owner, technical owner, and risk owner. Shared ownership creates gaps. |
| 2. Proportional Governance | Governance intensity scales with autonomy level, impact severity, and regulatory exposure. One-size-fits-all fails. |
| 3. Human Authority Over AI | Humans remain accountable; escalation paths exist; override mechanisms are available. AI may act, but humans own outcomes. |
| 4. Lifecycle Governance | Approval is continuous, not a one-time event. Controls are revalidated after drift, model updates, expanded permissions, and new integrations. |
| 5. Transparency & Auditability | Systems are explainable enough to govern; logged enough to investigate; traceable to decisions and actions. |
| 6. Enforceability Over Documentation | Policies alone do not govern AI. Controls must be enforceable through platforms, workflows, and authority (pause/stop). |

8) Why “Power to Pause” Is Critical

The ability to pause or stop deployments is not optional. Without it, risk exceptions accumulate silently, temporary workarounds become permanent, and autonomy grows faster than controls. With it, governance becomes credible and teams design for compliance upfront—enabling AI to scale sustainably.

Figure — AGSC Governance Authority & “Power to Pause”

This diagram reinforces the executive message: AI governance is a decision system (not a delivery team) that approves use cases, validates controls across the lifecycle, accepts residual risk explicitly, and retains the authority to pause deployments when safeguards are incomplete.

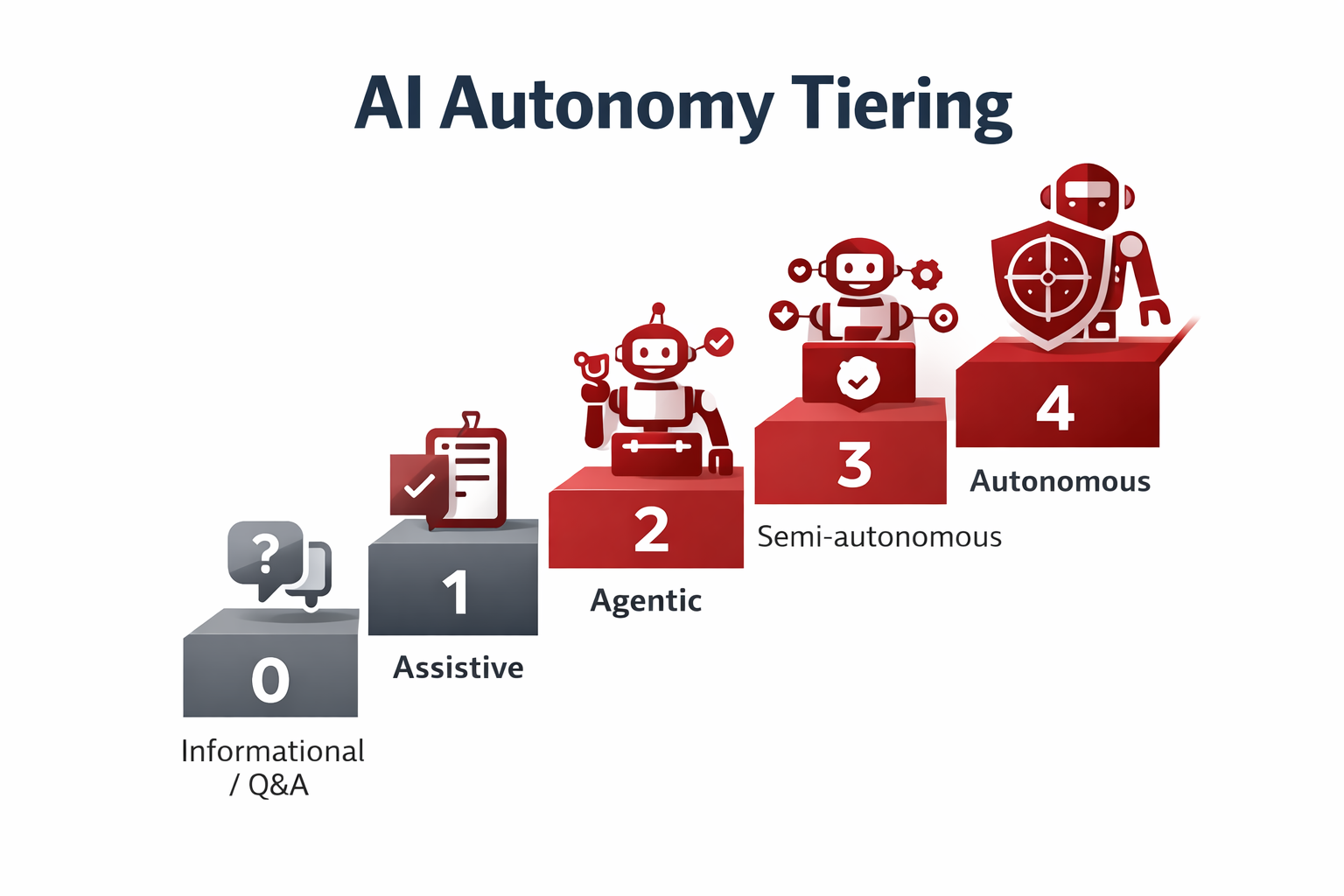

AI Autonomy Tiers & Governance Requirements

AI systems should not be governed uniformly. Governance strength must scale with the level of autonomy and the potential impact of failure. The following model defines five autonomy tiers and the corresponding governance controls required to operate them safely and responsibly.

Figure — Autonomy Tiering (Tier 0 → Tier 4)

Use tiering to standardize governance: each step up increases authority, tool access, and potential impact. This creates predictable approval gates, stronger “least privilege” boundaries, and clearer expectations for human oversight before any agent is allowed to take actions (especially Tier 2+).

| Tier | Autonomy level | What the agent does | Required governance controls |
|---|---|---|---|
| 0 | Informational / Q&A | Provides information or explanations only. No tool usage, no system actions, and no data writes. | Content accuracy standards, approved knowledge sources, disclaimers, lightweight logging, and periodic content review. |
| 1 | Assistive | Drafts, summarizes, or recommends content while a human remains the final decision-maker. | Human-in-the-loop validation, data access approval, prompt/output guardrails, audit logs, and clear business ownership. |
| 2 | Agentic | Uses tools and retrieves data to perform scoped actions within predefined permissions and constraints. | Formal use-case approval, least-privilege access, tool allowlists, continuous monitoring, kill switch, and threat modeling (e.g., MITRE ATLAS). |
| 3 | Semi-Autonomous | Executes actions independently within defined limits, thresholds, and escalation rules. | Executive approval (AGSC), autonomy boundaries, SOC monitoring, incident response playbooks, audits, and residual risk acceptance. |
| 4 | Autonomous | Operates end-to-end with minimal or no human intervention, making and executing decisions independently. | Board-level oversight, continuous real-time monitoring, hard kill switch, independent validation, external audits, and explicit risk acceptance. Often restricted or prohibited. |
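The tier table above lends itself to a lookup that a platform team could use to check whether an agent's controls match its tier. The sketch below is one possible encoding: the enum, the control identifiers, and the function names are hypothetical, and the table does not say whether higher tiers inherit lower-tier controls, so each tier is checked only against its own row.

```python
from enum import IntEnum


class Tier(IntEnum):
    INFORMATIONAL = 0
    ASSISTIVE = 1
    AGENTIC = 2
    SEMI_AUTONOMOUS = 3
    AUTONOMOUS = 4


# Controls listed for each tier in the table (identifiers are illustrative).
REQUIRED_CONTROLS = {
    Tier.INFORMATIONAL: {
        "content_accuracy_standards", "approved_knowledge_sources", "disclaimers",
        "lightweight_logging", "periodic_content_review",
    },
    Tier.ASSISTIVE: {
        "human_in_the_loop_validation", "data_access_approval",
        "prompt_output_guardrails", "audit_logs", "business_ownership",
    },
    Tier.AGENTIC: {
        "formal_use_case_approval", "least_privilege_access", "tool_allowlists",
        "continuous_monitoring", "kill_switch", "threat_modeling",
    },
    Tier.SEMI_AUTONOMOUS: {
        "agsc_executive_approval", "autonomy_boundaries", "soc_monitoring",
        "incident_response_playbooks", "audits", "residual_risk_acceptance",
    },
    Tier.AUTONOMOUS: {
        "board_level_oversight", "continuous_real_time_monitoring", "hard_kill_switch",
        "independent_validation", "external_audits", "explicit_risk_acceptance",
    },
}


def missing_controls(tier: Tier, implemented: set[str]) -> set[str]:
    """Return the controls the table requires for this tier that are not in place."""
    return REQUIRED_CONTROLS[tier] - implemented


def can_take_actions(tier: Tier) -> bool:
    """Tier 2 and above use tools or act on systems; lower tiers only inform or draft."""
    return tier >= Tier.AGENTIC
```

A deployment pipeline could refuse promotion whenever `missing_controls` returns a non-empty set for the agent's assigned tier.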

Leadership takeaway

AI Governance is not about limiting innovation. It is about ensuring that accountability, controls, and oversight increase in proportion to autonomy and risk. Organizations that scale AI safely treat autonomy as a governance decision, not just a technical feature.

1) AI Governance Steering Committee (AGSC)

Establish an AI Governance Steering Committee with decision authority for AI use cases, model/agent approvals, risk acceptance, and production readiness. The AGSC should sit at the same level as enterprise risk committees, and be empowered to pause deployments when controls are incomplete.

2) Membership & decision rights

| Role | Primary responsibilities | Decision rights |
|---|---|---|
| Executive Sponsor (CIO/CDO/CTO) | Strategic alignment, investment decisions, escalation authority | Go/No-go funding |
| CISO / Security Architecture | Threat modeling, access control, SOC integration, incident response readiness | Security sign-off |
| Legal + Compliance (GRC) | Regulatory mapping, policy controls, vendor terms, audit readiness | Compliance sign-off |
| Data Governance / Privacy Officer | Data classification, minimization, retention, privacy impact assessments | Data access approval |
| AI/ML Lead / Platform Owner | Model selection, evaluation, guardrails, autonomy tiering | Tech design approval |
| Risk Management (ERM) | Risk appetite, impact scoring, residual-risk acceptance process | Risk acceptance |
| Business Owner | Use-case ownership, KPI success criteria, human-in-the-loop validation | Operational sign-off |
| HR / Change Mgmt | Training, role impact, adoption measurement, workforce enablement | Training readiness |
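The decision rights in the table above can be tracked as a sign-off matrix so that a release cannot proceed while any right is unexercised. This is a minimal sketch under the assumption that every decision right applies to every release; the mapping and the function name `outstanding_signoffs` are illustrative.

```python
# Decision right -> role that holds it, as listed in the membership table.
DECISION_RIGHTS = {
    "Go/No-go funding": "Executive Sponsor (CIO/CDO/CTO)",
    "Security sign-off": "CISO / Security Architecture",
    "Compliance sign-off": "Legal + Compliance (GRC)",
    "Data access approval": "Data Governance / Privacy Officer",
    "Tech design approval": "AI/ML Lead / Platform Owner",
    "Risk acceptance": "Risk Management (ERM)",
    "Operational sign-off": "Business Owner",
    "Training readiness": "HR / Change Mgmt",
}


def outstanding_signoffs(recorded: dict[str, str]) -> list[tuple[str, str]]:
    """Return (decision right, responsible role) pairs that have no named signer yet.

    recorded maps a decision right to the name of the person who signed it;
    a missing key or an empty name counts as not signed.
    """
    return [
        (right, role)
        for right, role in DECISION_RIGHTS.items()
        if not recorded.get(right, "").strip()
    ]
```

Returning the responsible role alongside each open item makes it clear who needs to act, which supports the "named owner" idea used throughout this section.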

Tip: keep the AGSC small and empowered. Use “on-demand” members (Internal Audit, Procurement, Product Safety, etc.) for specific reviews.

3) Operating model (minimum viable governance)

- Meet monthly during rollout; quarterly when stable.

- Require a named human owner for each agent and workflow (“accountability anchor”).

- Classify autonomy by tier: Informational → Assistive → Agentic (tool use) → Semi-autonomous → Autonomous (rare; high controls).

- Approve: data sources, permissions, tool catalog, output guardrails, logging, fallback modes.

- Authorize: “kill switch” and rollback plan before production.

- Run post-incident reviews and mandate control improvements.

Figure — AGSC Governance Flow (Intake → Gates → Approve → Monitor → Improve)

This flow illustrates the minimum viable governance loop for AI and agentic systems: start with structured intake, route through data/privacy/security/legal gates, approve under explicit autonomy limits, then monitor for drift, anomalies, and policy violations. Incidents feed directly into post-mortems and control upgrades.
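The flow described above can be read as a small state machine. The sketch below is illustrative only: the stage and gate names follow the caption, while the `next_stage` function and the choice to send upgraded controls back through the gates after an incident are assumptions, not something the source specifies.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    GATES = "gates"
    APPROVE = "approve"
    MONITOR = "monitor"
    IMPROVE = "improve"


# Review gates named in the flow description.
GATE_NAMES = ("data", "privacy", "security", "legal")


def next_stage(stage: Stage, gates_passed: frozenset = frozenset(), incident: bool = False) -> Stage:
    """Advance one step through the governance loop."""
    if stage is Stage.INTAKE:
        return Stage.GATES
    if stage is Stage.GATES:
        # Remain at the gates until every gate has passed.
        return Stage.APPROVE if set(GATE_NAMES) <= set(gates_passed) else Stage.GATES
    if stage is Stage.APPROVE:
        return Stage.MONITOR
    if stage is Stage.MONITOR:
        # Incidents feed into post-mortems and control upgrades.
        return Stage.IMPROVE if incident else Stage.MONITOR
    # IMPROVE: assumption, upgraded controls are re-reviewed at the gates.
    return Stage.GATES
```

Modeling the loop this way makes the "no approval without all gates" rule explicit and gives monitoring a defined path back into review.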

Executive takeaway

The most reliable pattern across industries is a governance body with real authority, combined with a security framework stack that covers both classic cyber controls and AI-specific risks (bias, hallucination, prompt injection, and model integrity).

What “good” looks like in practice

- Inventory: Every agent is registered with owner, purpose, data sources, and permissions.

- Least privilege: Agent can only read/write what the task requires (time-bound tokens where possible).

- DLP + privacy: PII/PHI redaction, blocklists, and secure prompt handling.

- Monitoring: Model & tool calls logged; anomaly alerts to the SOC.

- Fallback: Manual process or “safe mode” if the agent misbehaves.
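The inventory and least-privilege items above can be sketched as a registry record plus a time-bound permission check. Everything here is a hypothetical illustration: `AgentRecord`, `Grant`, `registration_gaps`, and `is_allowed` are invented names, and a real deployment would rely on the organization's identity and access management platform rather than in-memory objects.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class AgentRecord:
    name: str
    owner: str
    purpose: str
    data_sources: list[str] = field(default_factory=list)
    permissions: set[str] = field(default_factory=set)


@dataclass
class Grant:
    permission: str
    expires_at: datetime  # time-bound token expiry


def registration_gaps(record: AgentRecord) -> list[str]:
    """List the inventory fields that are still empty for this agent."""
    gaps = []
    if not record.owner.strip():
        gaps.append("owner")
    if not record.purpose.strip():
        gaps.append("purpose")
    if not record.data_sources:
        gaps.append("data_sources")
    if not record.permissions:
        gaps.append("permissions")
    return gaps


def is_allowed(record: AgentRecord, grant: Grant, action: str, now: datetime) -> bool:
    """Allow an action only if it is registered, granted, and the grant has not expired."""
    return action in record.permissions and action == grant.permission and now < grant.expires_at


# Usage with a hypothetical claims-summary agent and a one-hour grant.
issued = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
agent = AgentRecord("claims-summarizer", "J. Doe", "Summarize claims", ["claims_db"], {"claims:read"})
grant = Grant("claims:read", issued + timedelta(hours=1))
```

In this sketch, a write attempt or a read after the grant expires is denied, and the same request would then fall back to the manual process or "safe mode" described above.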

Quick examples by domain

Use these as “story prompts” when students write leadership memos.

- Healthcare: Agent summarizes claims → strict PHI controls + audit logs + human review.

- Finance: Agent drafts client emails → DLP + banned data types + approvals before sending.

- HR: Agent screens candidates → bias testing + restricted access to sensitive attributes.

- Customer service: Agent issues refunds → tool constraints (refund caps) + escalation policy.
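The customer service example can be made concrete with a tool constraint that caps refunds and escalates anything larger. This is a sketch under stated assumptions: the cap value of 50.00 and the function name `route_refund` are hypothetical and would be set by the business owner and the AGSC in practice.

```python
from decimal import Decimal

# Hypothetical cap; the actual value is a governance decision.
REFUND_CAP = Decimal("50.00")


def route_refund(amount: Decimal, cap: Decimal = REFUND_CAP) -> str:
    """Decide whether the agent may issue a refund itself or must escalate to a human."""
    if amount <= 0:
        raise ValueError("refund amount must be positive")
    if amount <= cap:
        return "agent_may_refund"
    return "escalate_to_human"
```

Enforcing the cap inside the refund tool, rather than in the agent's prompt, keeps the limit in place even if the agent is manipulated or misbehaves.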