Building an AI Agent as a Product: From Idea to Production

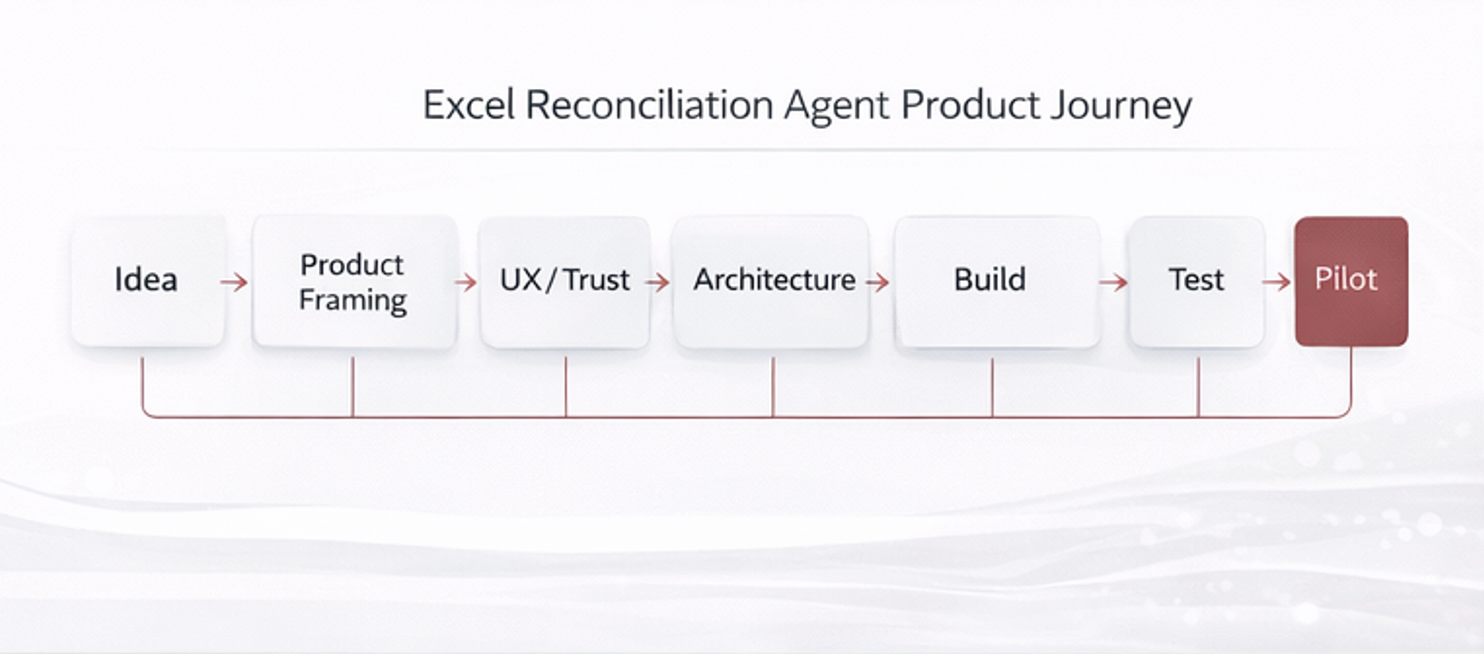

Designing an AI agent should be approached as a product development effort, not as a prompt-engineering exercise.

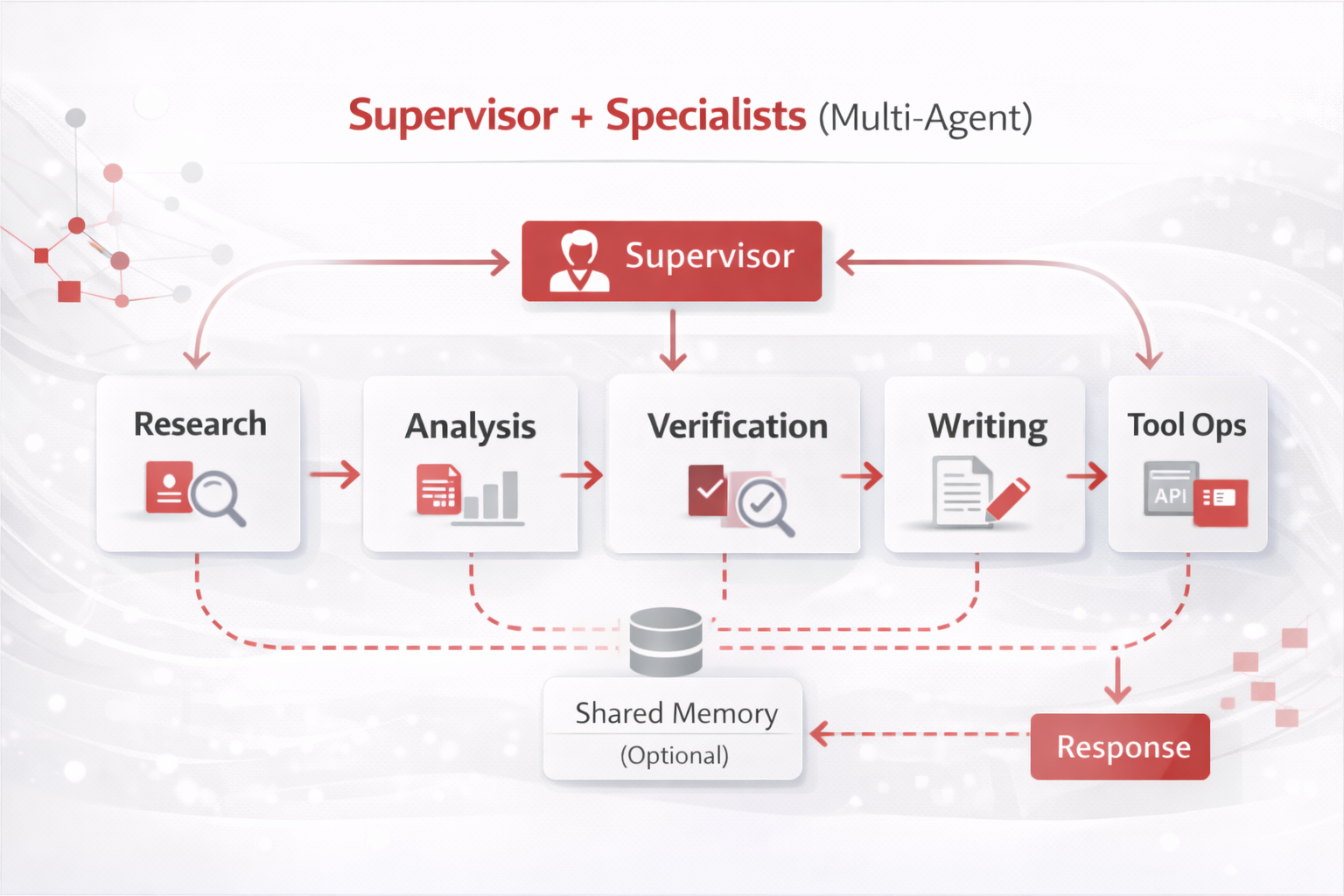

Mature organizations treat agents as software products with clear users, workflows, controls, UX, and lifecycle management.

Below is a detailed, industry-grade process, illustrated with a real example: an Excel Reconciliation Agent.

Agent type & recommended patterns for this example

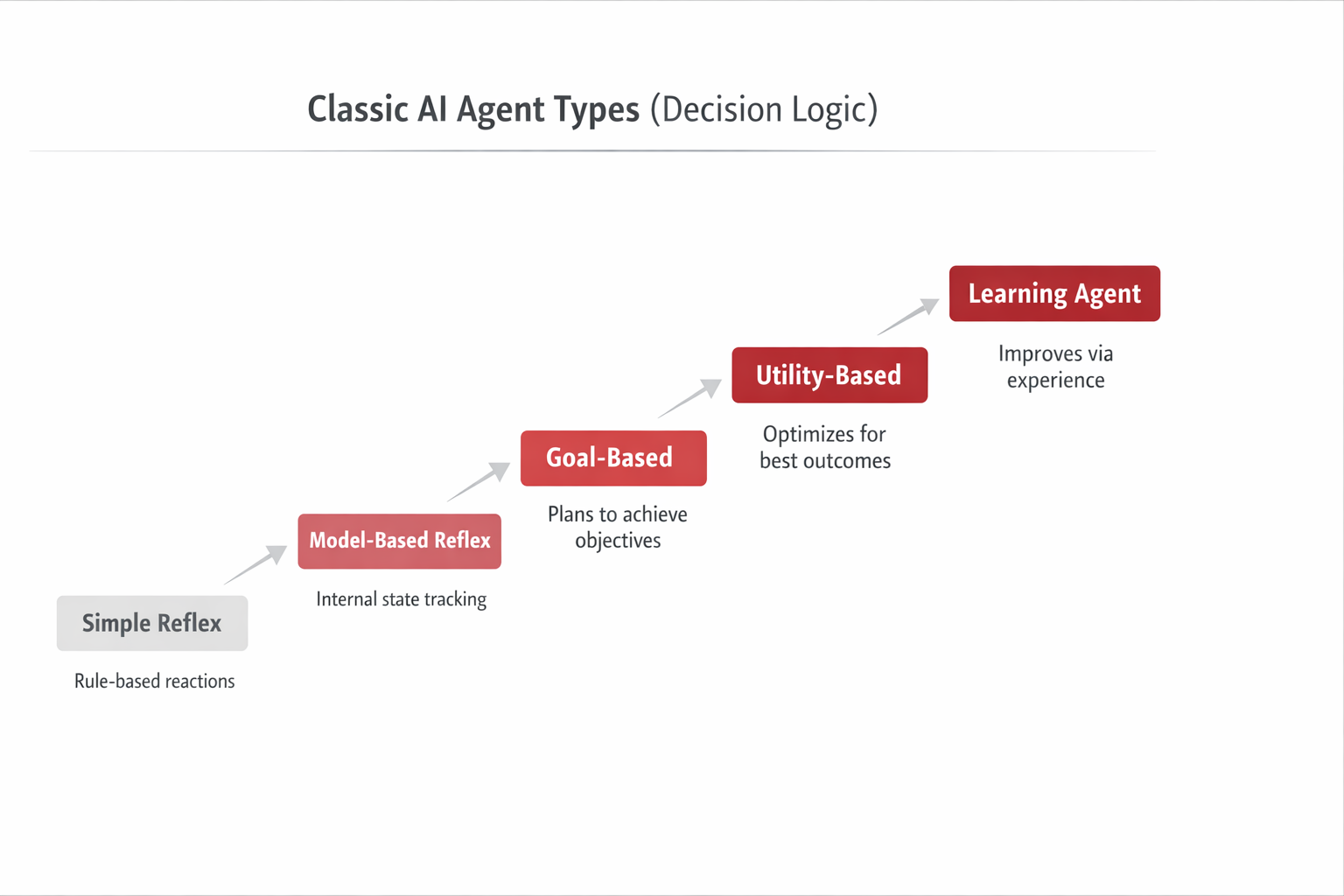

Treat the Excel Reconciliation Agent as a goal-directed operational agent (classic taxonomy: goal-based / utility-aware).

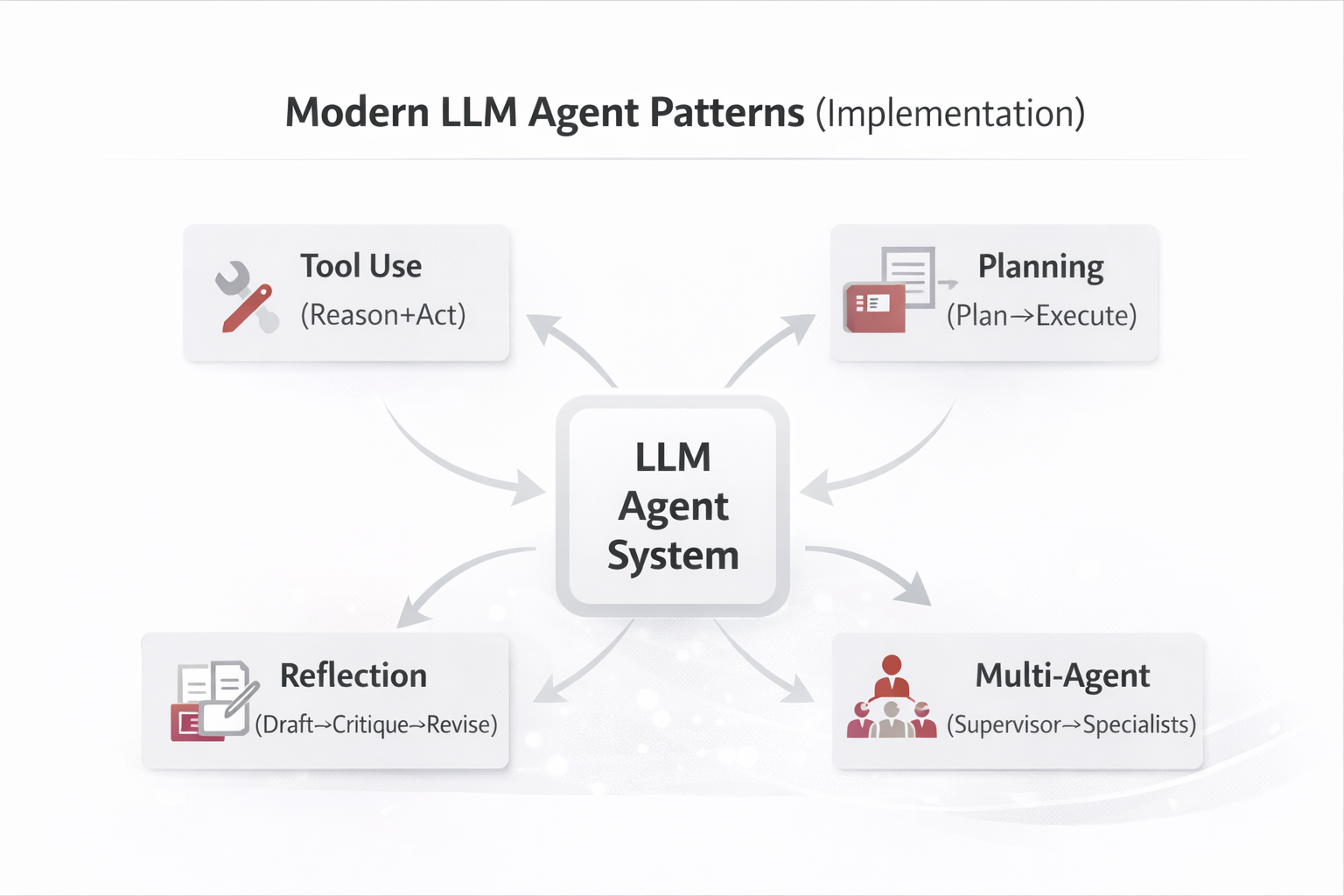

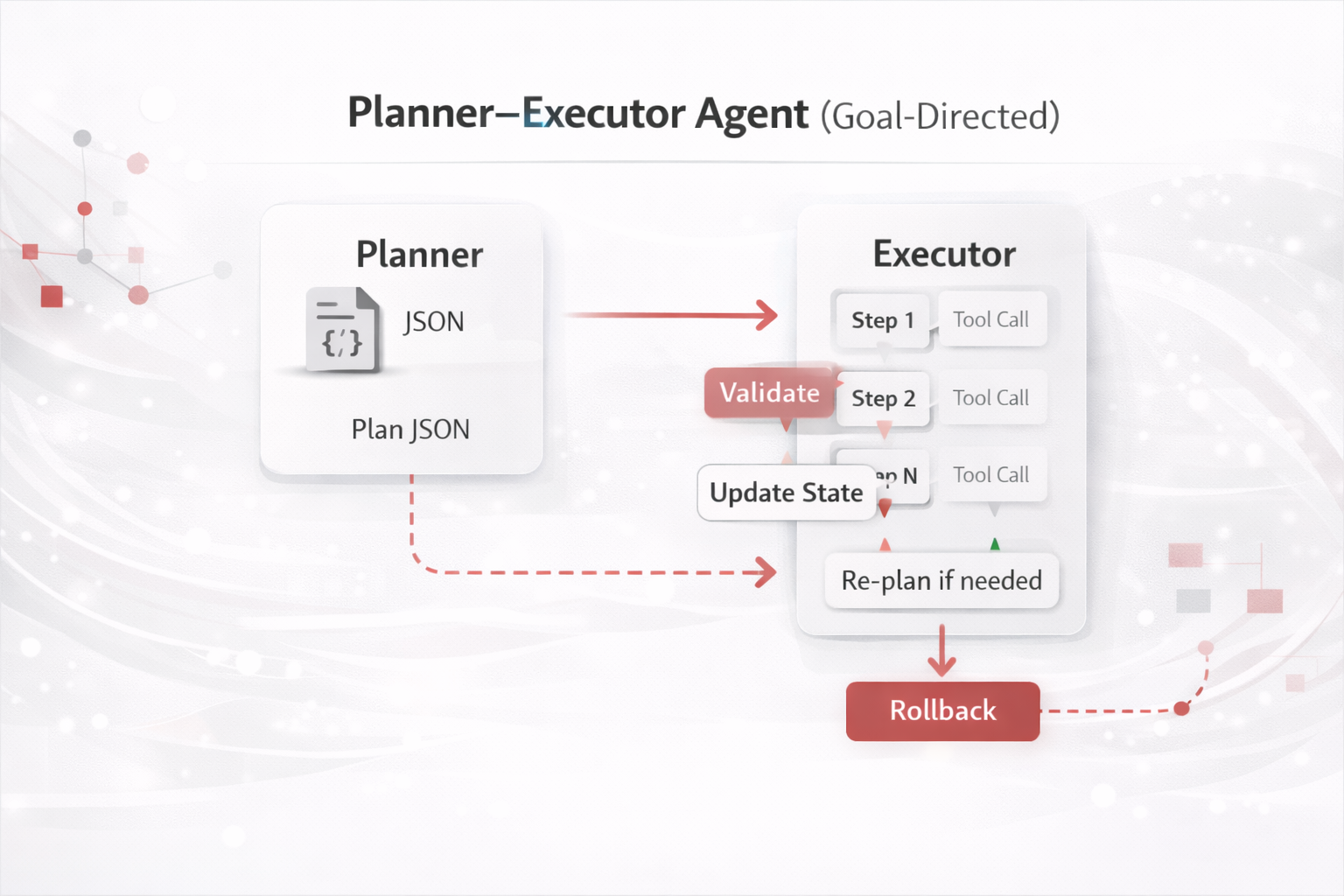

Architecturally, it is best implemented as a Planner–Executor with Tool Use (parsing, matching, exporting),

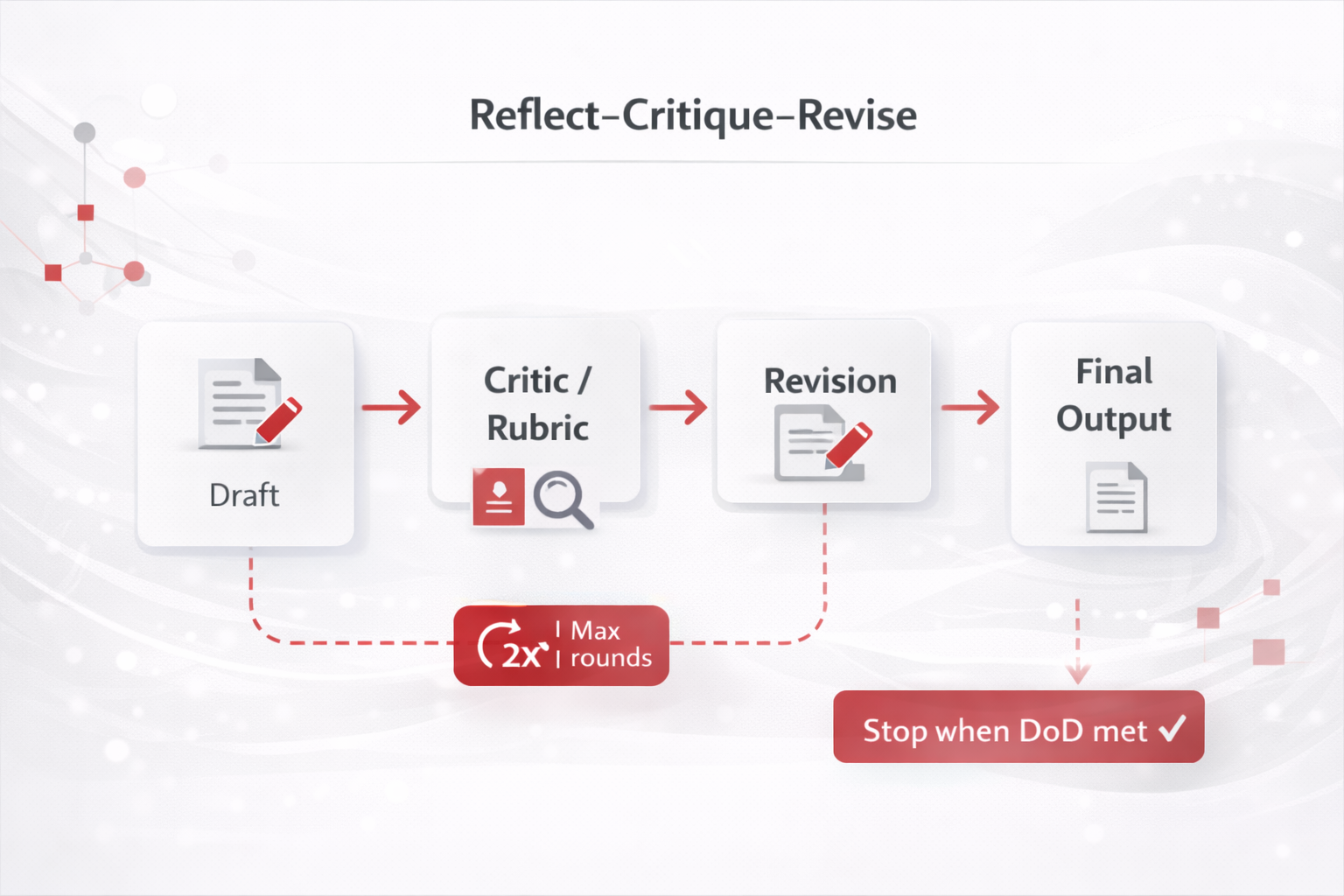

plus a bounded Reflection loop for quality gates. Optionally add RAG if you must ground decisions in policy/SOP (e.g., accounting rules).

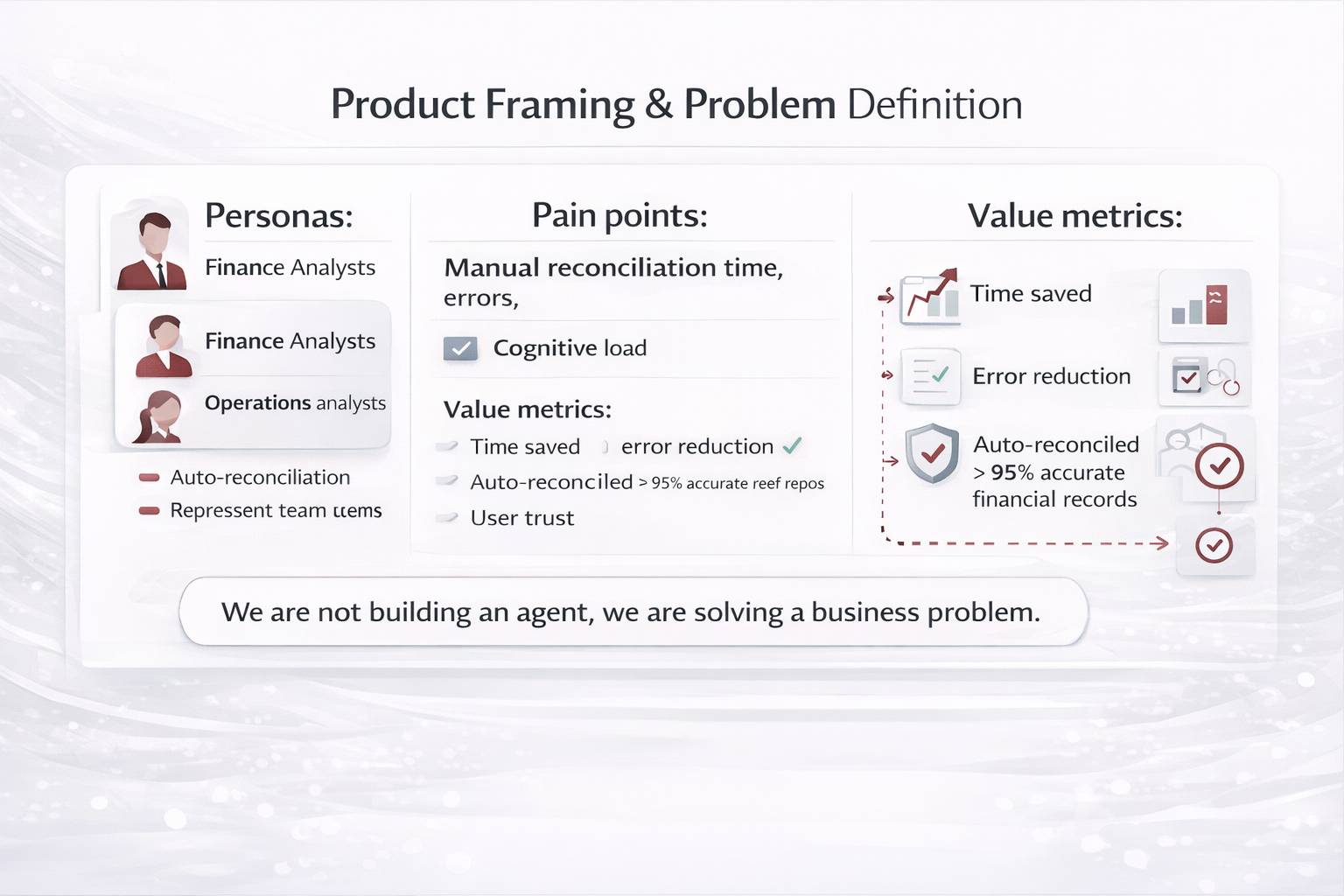

1) Product Framing & Problem Definition

Start by framing the agent as a product with a clear value proposition. For Excel reconciliation, the problem is not “compare files”; it is to reduce manual reconciliation time, errors, and cognitive load for finance or operations teams.

Who / Why

- Primary users: finance analysts, operations analysts

- Jobs-to-be-done: reconcile heterogeneous Excel files quickly and confidently

- Success metrics: time saved, error reduction, % auto-reconciled, user trust

Patterns applied

- Planning (define DoD, constraints)

- Reflection (risk/assumption review)

At this stage, “planning” is product planning: scope, constraints, and Definition of Done (DoD) that later becomes testable criteria.
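One way to make the DoD testable from day one is to encode it as thresholds that every evaluation run is checked against. This is only an illustrative sketch: `DefinitionOfDone`, `RunMetrics`, `unmet_criteria`, and every threshold value are hypothetical placeholders for whatever the team actually agrees during framing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DefinitionOfDone:
    """Hypothetical DoD thresholds agreed during product framing."""
    min_auto_reconciled_pct: float = 80.0  # share of rows closed without human review
    max_error_rate_pct: float = 0.5        # wrong matches as % of auto-accepted rows
    max_minutes_per_file: float = 10.0     # end-to-end processing budget


@dataclass(frozen=True)
class RunMetrics:
    total_rows: int
    auto_reconciled: int
    wrong_auto_matches: int
    minutes: float


def unmet_criteria(dod: DefinitionOfDone, m: RunMetrics) -> list[str]:
    """Return the DoD criteria a run fails; an empty list means the run meets the DoD."""
    auto_pct = 100.0 * m.auto_reconciled / m.total_rows if m.total_rows else 0.0
    error_pct = 100.0 * m.wrong_auto_matches / m.auto_reconciled if m.auto_reconciled else 0.0
    failures = []
    if auto_pct < dod.min_auto_reconciled_pct:
        failures.append(f"auto-reconciled {auto_pct:.1f}% < {dod.min_auto_reconciled_pct}%")
    if error_pct > dod.max_error_rate_pct:
        failures.append(f"error rate {error_pct:.2f}% > {dod.max_error_rate_pct}%")
    if m.minutes > dod.max_minutes_per_file:
        failures.append(f"runtime {m.minutes} min > {dod.max_minutes_per_file} min")
    return failures


# Example: 850 of 1,000 rows auto-reconciled, 2 of them wrong, 6.5 minutes end to end.
print(unmet_criteria(DefinitionOfDone(), RunMetrics(1000, 850, 2, 6.5)))
```

The same check can later run in CI against the golden set, so "done" means the same thing in product reviews and in test reports.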

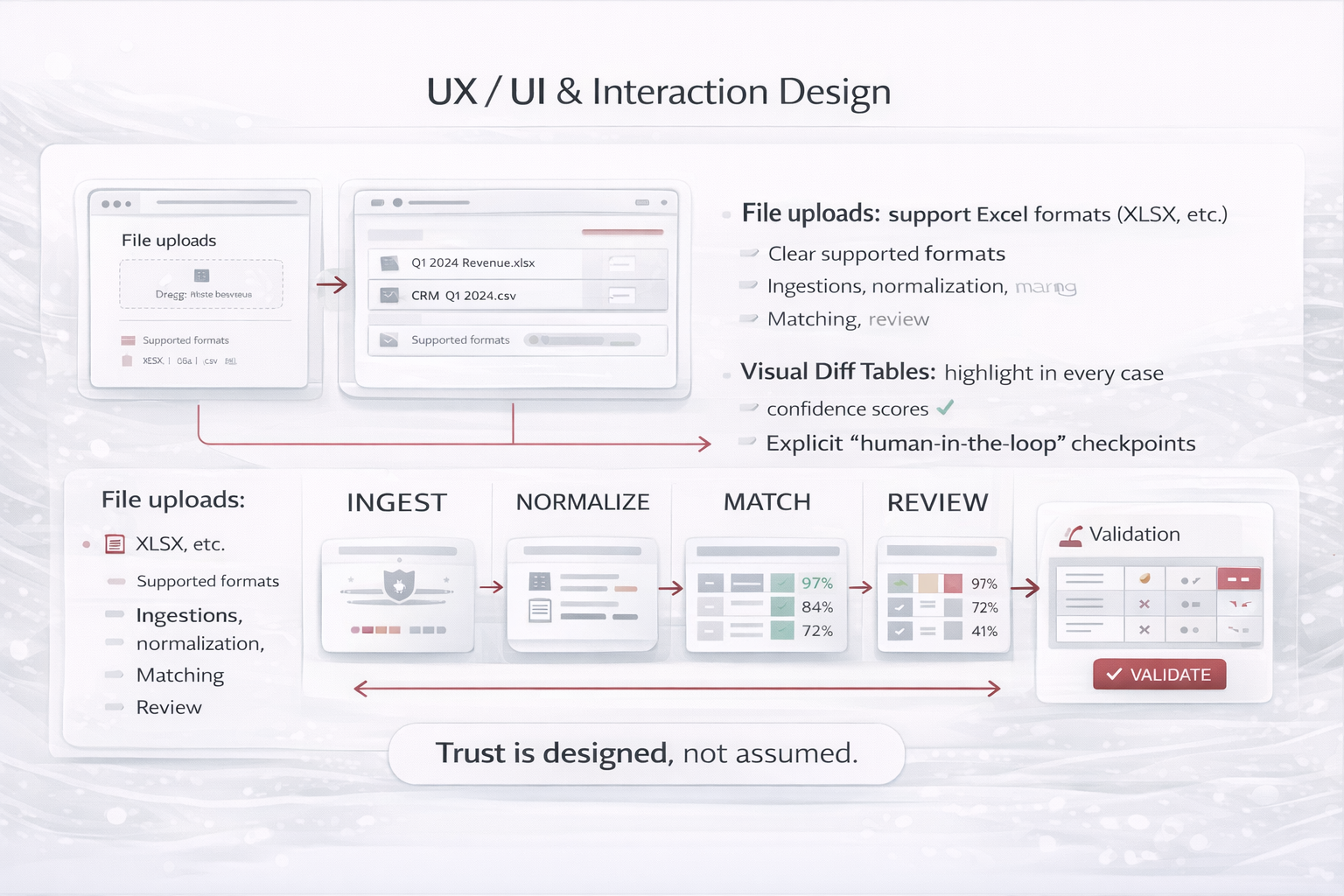

2) UX / UI & Interaction Design

Agents must be designed around progressive trust. Users should always understand what the agent is doing, what it is confident about, and where human validation is required.

- File upload with clear supported formats and data privacy messaging

- Step-by-step progress indicators (ingestion, normalization, matching, review)

- Visual diff tables highlighting matches, mismatches, and confidence scores

- Explicit “human-in-the-loop” checkpoints for low-confidence matches

UX patterns that build trust

- Explainability: show “why this matched” (signals used)

- Confidence bands: auto-accept (high), review (medium), reject/escalate (low)

- Undo/rollback: users can revert exports or downstream writes
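Confidence bands and "why this matched" explanations can both be driven from a single match-proposal record. The sketch below assumes a hypothetical `MatchProposal` shape and illustrative thresholds (0.90 and 0.60); real cut-offs would be tuned on a labeled evaluation set.

```python
from dataclasses import dataclass, field

# Hypothetical band thresholds; real values would be tuned on a labeled evaluation set.
HIGH = 0.90
LOW = 0.60


@dataclass
class MatchProposal:
    left_id: str
    right_id: str
    confidence: float
    signals: list[str] = field(default_factory=list)  # evidence behind "why this matched"


def band(p: MatchProposal) -> str:
    """Map a proposal to a UX band: auto-accept (high), review (medium), escalate (low)."""
    if p.confidence >= HIGH:
        return "auto_accept"
    if p.confidence >= LOW:
        return "review"
    return "escalate"


def explain(p: MatchProposal) -> str:
    """Render the user-facing explanation from the signals that drove the match."""
    reasons = "; ".join(p.signals) or "no supporting signals"
    return f"{p.left_id} <-> {p.right_id} [{band(p)}, {p.confidence:.0%}]: {reasons}"


p = MatchProposal("INV-1042", "BANK-88", 0.93, ["same reference", "amount equal", "dates 1 day apart"])
print(explain(p))
```

Keeping the signals on the record, rather than generating an explanation after the fact, means the UI only ever shows evidence the matcher actually used.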

Patterns applied

- Tool Use (upload, parse, export)

- Safety Gate (confirm writes)

UX is where you operationalize “Safety Gate”: confirmations, previews, and permission checks before irreversible actions.
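A minimal sketch of such a gate, assuming a hypothetical `SafetyGate` class and writers that return their own undo function: the gate checks permission first, refuses to write without explicit confirmation (surfacing a preview instead), and keeps an undo stack to back the rollback feature. The in-memory `ledger` is a toy stand-in for a real downstream system.

```python
from dataclasses import dataclass, field
from typing import Callable

Writer = Callable[[list], Callable[[], None]]  # performs a write, returns its undo function


class ConfirmationRequired(Exception):
    """Raised when an irreversible action is attempted without explicit user approval."""


@dataclass
class SafetyGate:
    allowed_actions: set[str]
    undo_stack: list = field(default_factory=list)

    def preview(self, action: str, rows: list) -> str:
        return f"{action}: {len(rows)} row(s) will be written"

    def execute(self, action: str, rows: list, write: Writer, confirmed: bool) -> None:
        if action not in self.allowed_actions:           # permission check
            raise PermissionError(f"'{action}' is not permitted for this user")
        if not confirmed:                                # confirmation, with a preview
            raise ConfirmationRequired(self.preview(action, rows))
        self.undo_stack.append(write(rows))              # keep the undo for rollback

    def rollback_last(self) -> None:
        if self.undo_stack:
            self.undo_stack.pop()()


# Toy in-memory "ledger" standing in for a real downstream system.
ledger: list = []


def write_to_ledger(rows: list) -> Callable[[], None]:
    start = len(ledger)
    ledger.extend(rows)

    def undo() -> None:
        del ledger[start:]

    return undo
```

Calling `execute(..., confirmed=False)` raises `ConfirmationRequired` carrying the preview text, which the UI can display before asking the user to approve; `rollback_last()` reverts the most recent confirmed write.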

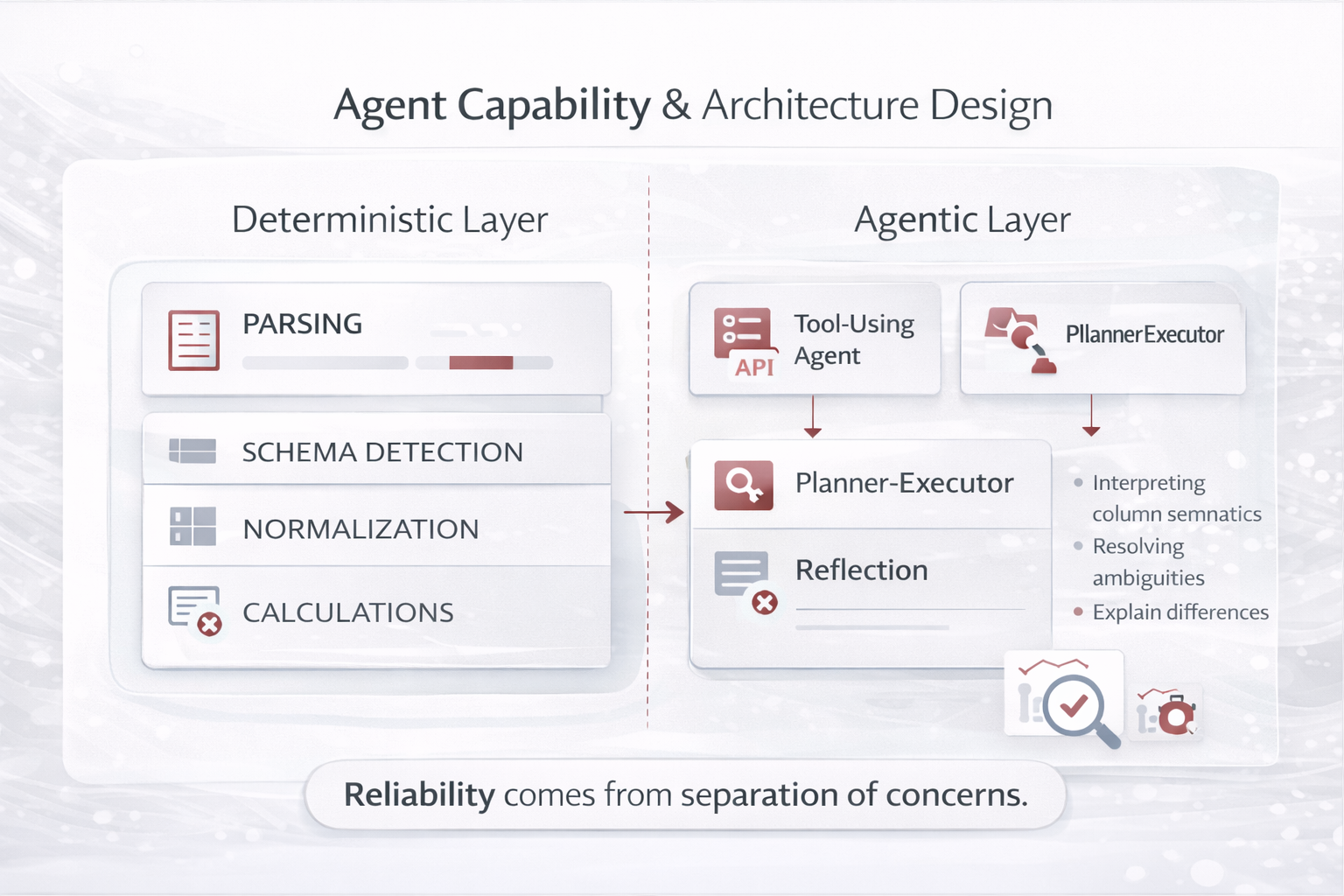

3) Agent Capability & Architecture Design

Decompose the agent into deterministic and non-deterministic components. This separation is critical for reliability and explainability.

In reconciliation, the math and file I/O are deterministic; the ambiguity resolution is where the LLM helps most.

- Deterministic layer: file parsing, schema detection, normalization, calculations

- Agentic layer: interpreting column semantics, resolving ambiguities, explaining differences

- Design patterns used: Tool-Using Agent + Planner–Executor + Reflection
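A minimal sketch of the deterministic layer, using only the Python standard library (a real system would first read the workbooks with a spreadsheet library). The row shape (`ref`, `amount`), the helper names, and `DATE_FORMATS` are hypothetical. Note that the code does not guess: an amount it cannot parse, or a date string such as `03/04/2024` that fits two formats, is left for the agentic layer to interpret.

```python
from collections import defaultdict
from datetime import date, datetime
from decimal import Decimal, InvalidOperation
from typing import Optional

# Assumed date formats seen in the input files; extend per data source.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m/%d/%Y")


def normalize_amount(raw: str) -> Optional[Decimal]:
    """Parse '1,234.50', '(200.00)' or '-200' into an exact Decimal; None if unparseable."""
    s = raw.strip().replace(",", "").replace("$", "")
    negative = s.startswith("(") and s.endswith(")")  # accounting-style negative
    try:
        value = Decimal(s.strip("()"))
    except InvalidOperation:
        return None
    return -value if negative else value


def candidate_dates(raw: str) -> set[date]:
    """Every date the string could denote; more than one means it is ambiguous."""
    found = set()
    for fmt in DATE_FORMATS:
        try:
            found.add(datetime.strptime(raw.strip(), fmt).date())
        except ValueError:
            pass
    return found


def match_key(row: dict) -> tuple:
    return (row["ref"].strip().upper(), normalize_amount(row["amount"]))


def exact_match(left: list[dict], right: list[dict]):
    """Match on (reference, amount); leftovers are handed to the agentic layer."""
    index = defaultdict(list)
    for r in right:
        index[match_key(r)].append(r)
    matched, unmatched_left = [], []
    for row in left:
        key = match_key(row)
        if key[1] is not None and index.get(key):
            matched.append((row["ref"], index[key].pop(0)["ref"]))
        else:
            unmatched_left.append(row)
    unmatched_right = [r for rows in index.values() for r in rows]
    return matched, unmatched_left, unmatched_right
```

Using `Decimal` rather than floats keeps amount comparisons exact, which matters for auditability: `1,234.50` and `1234.5` compare equal, and no rounding tolerance has to be explained to an auditor.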

Architecture guidance

Keep the LLM on the “interpretation & decision support” plane, and keep the “data processing & calculations” plane deterministic. This improves accuracy, auditability, and cost control.

Patterns applied

- Planner–Executor

- Tool Use

- Reflection (bounded)
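The control flow of these three patterns can be illustrated with a minimal, self-contained sketch. Everything here is hypothetical: the planner is a fixed list rather than an LLM call, the tools are toy lambdas, and `resolve` is a stand-in for the LLM-backed ambiguity resolver. The point is the structure: the planner emits steps, the executor runs tools, and a critic applies quality gates, with a hard cap on re-planning so the Reflection loop stays bounded.

```python
from typing import Callable

Tool = Callable[[dict], dict]
MAX_UNMATCHED_SHARE = 0.2  # hypothetical quality gate


def plan(task: dict) -> list[str]:
    """Planner: an ordered list of tool names (an LLM could draft this; here it is fixed)."""
    steps = ["ingest", "normalize", "match"]
    if task.get("export"):
        steps.append("export")
    return steps


def execute(steps: list[str], tools: dict[str, Tool], task: dict) -> dict:
    """Executor: run each tool in order, threading the state through."""
    state = {"task": task}
    for name in steps:
        state = tools[name](state)
    return state


def critique(state: dict) -> list[str]:
    """Reflection: deterministic quality gates the run must pass."""
    issues = []
    if state["matched"] + state["unmatched"] != state["rows"]:
        issues.append("row counts do not balance")
    if state["rows"] and state["unmatched"] / state["rows"] > MAX_UNMATCHED_SHARE:
        issues.append("unmatched share above gate")
    return issues


def revise(steps: list[str], issues: list[str]) -> list[str]:
    """Re-plan: add the ambiguity resolver after matching if too much is unmatched."""
    if any("unmatched" in i for i in issues) and "resolve" not in steps:
        pos = steps.index("match") + 1
        return steps[:pos] + ["resolve"] + steps[pos:]
    return steps


def run_agent(task: dict, tools: dict[str, Tool], max_iterations: int = 3) -> dict:
    steps = plan(task)
    issues: list[str] = []
    for attempt in range(1, max_iterations + 1):  # bounded: never loops forever
        state = execute(steps, tools, task)
        issues = critique(state)
        if not issues:
            return {"status": "done", "attempts": attempt, "steps": steps}
        new_steps = revise(steps, issues)
        if new_steps == steps:  # nothing left to try: stop early
            break
        steps = new_steps
    return {"status": "needs_human_review", "issues": issues, "steps": steps}


# Toy tools; "resolve" stands in for the LLM-backed ambiguity resolver.
TOOLS: dict[str, Tool] = {
    "ingest": lambda s: {**s, "rows": 10},
    "normalize": lambda s: s,
    "match": lambda s: {**s, "matched": 7, "unmatched": 3},
    "resolve": lambda s: {**s, "matched": s["matched"] + 2, "unmatched": s["unmatched"] - 2},
}
print(run_agent({"export": False}, TOOLS))
```

When the critic keeps failing and re-planning has nothing new to try, the run ends in `needs_human_review` rather than looping, which is what "bounded" Reflection means in practice.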

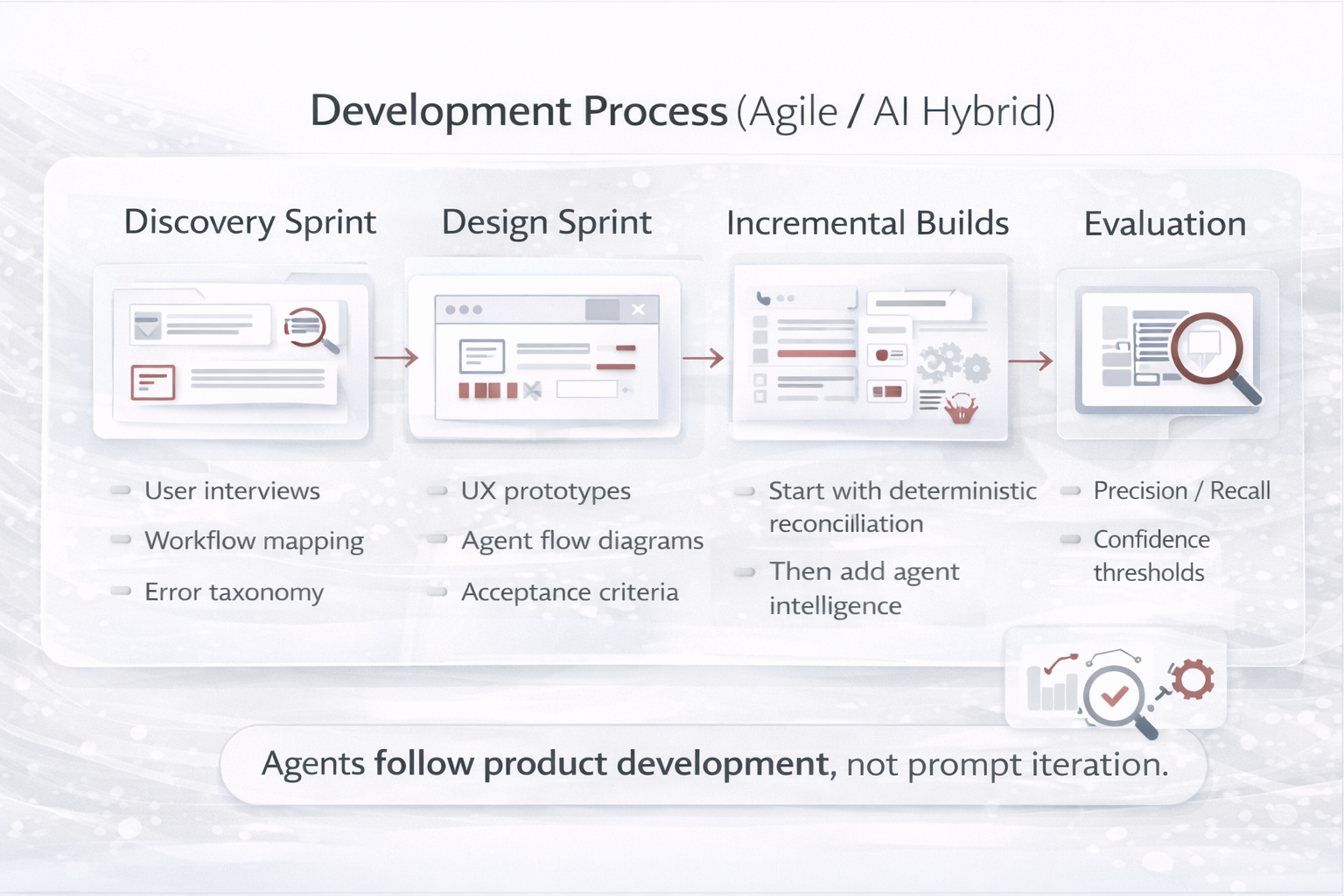

4) Development Process (Industry-Grade Model)

Use a hybrid approach: Agile product development for iterative delivery and stakeholder feedback, plus AI evaluation loops for reliability. Build the deterministic backbone first, then add agent intelligence.

| Phase | What you do | Artifacts you produce |
|---|---|---|
| Discovery Sprint | User interviews, workflow mapping, error taxonomy, data sampling | Personas, journey map, acceptance criteria, dataset plan |
| Design Sprint | UX prototypes, agent flow diagrams, tool schemas, guardrails | Clickable prototype, sequence diagrams, tool contracts |
| Incremental Builds | Deterministic reconciliation first, then agentic ambiguity resolver | MVP → v1 → v2 backlog, release notes |
| Evaluation Loops | Measure false positives/negatives, confidence thresholds, regressions | Eval dashboards, golden sets, test reports |
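The Evaluation Loops phase can be made concrete with a small scoring function. In this sketch (all names hypothetical), a golden set is a collection of (left row id, right row id) pairs that a human confirmed should match; false positives are pairs the agent matched wrongly, false negatives are true pairs it missed, and a release is flagged when precision or recall drops below the previous baseline.

```python
def evaluate(predicted: set, golden: set) -> dict:
    """Compare predicted match pairs with a labeled golden set."""
    tp = len(predicted & golden)
    fp = len(predicted - golden)  # pairs the agent matched that should not be matched
    fn = len(golden - predicted)  # true pairs the agent missed
    return {
        "tp": tp,
        "fp": fp,
        "fn": fn,
        "precision": tp / (tp + fp) if tp + fp else 1.0,
        "recall": tp / (tp + fn) if tp + fn else 1.0,
    }


def regressions(current: dict, baseline: dict, tolerance: float = 0.01) -> list:
    """Metrics that dropped by more than `tolerance` versus the last release."""
    return [m for m in ("precision", "recall") if current[m] < baseline[m] - tolerance]


golden = {("A", "1"), ("B", "2"), ("C", "3"), ("D", "4")}
predicted = {("A", "1"), ("B", "2"), ("C", "9")}
report = evaluate(predicted, golden)
print(report)
print(regressions(report, baseline={"precision": 0.90, "recall": 0.50}))
```

In reconciliation, false positives are usually the costlier error (a wrong match silently closes an item), so teams often gate releases on precision first and treat recall as the efficiency metric.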

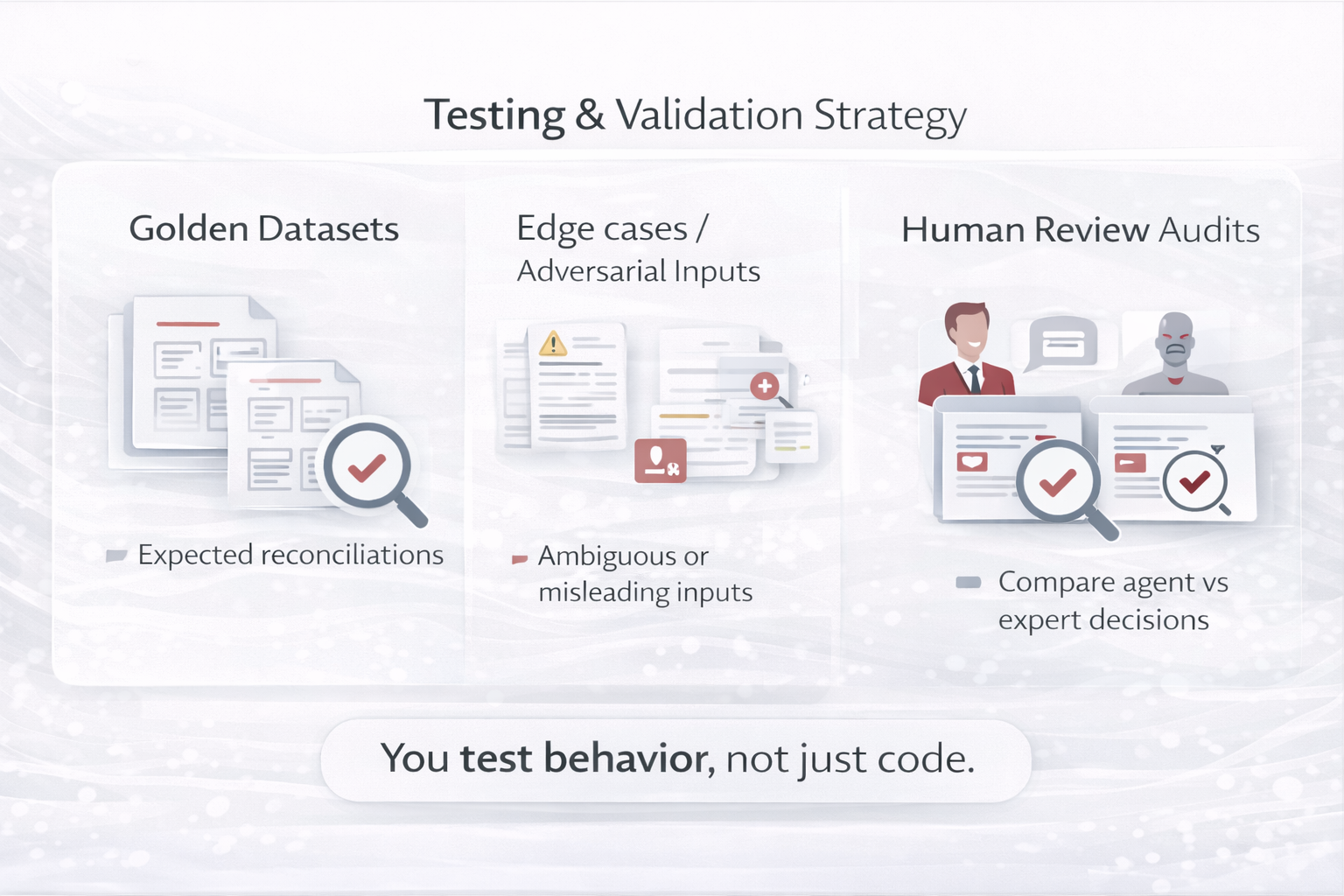

5) Testing & Validation Strategy

Testing an agent goes beyond unit tests. You must validate behavior, robustness, and trust, especially because input variability is the norm in Excel-based processes.

Testing layers

- Deterministic tests: parsers, calculations, format conversions

- Behavioral tests: matching decisions, explanations, confidence calibration

- Safety tests: permissions, injection, data leakage, write protections
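Confidence calibration, one of the behavioral checks above, can be written as an ordinary test: bucket labeled predictions by confidence band and check that each band is at least as accurate as the UX promises. The band thresholds, the `MIN_ACCURACY` targets, and the `(confidence, was_correct)` record format are assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical minimum observed accuracy per confidence band.
MIN_ACCURACY = {"high": 0.98, "medium": 0.80}


def band(confidence: float) -> str:
    if confidence >= 0.90:
        return "high"
    if confidence >= 0.60:
        return "medium"
    return "low"


def accuracy_by_band(records: list) -> dict:
    """records: (confidence, was_correct) pairs from a labeled evaluation set."""
    hits, totals = defaultdict(int), defaultdict(int)
    for confidence, correct in records:
        b = band(confidence)
        totals[b] += 1
        hits[b] += int(correct)
    return {b: hits[b] / totals[b] for b in totals}


def calibration_failures(accuracy: dict) -> list:
    """Bands whose observed accuracy is below the promised level."""
    return [b for b, minimum in MIN_ACCURACY.items() if b in accuracy and accuracy[b] < minimum]


records = (
    [(0.95, True)] * 50 + [(0.92, False)]      # high band
    + [(0.70, True)] * 8 + [(0.65, False)] * 2  # medium band
    + [(0.30, False)] * 5                       # low band: always escalated
)
print(calibration_failures(accuracy_by_band(records)))
```

If the "high" band fails this test, auto-accept is not safe at the current threshold, regardless of how good the overall accuracy looks.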

Patterns applied

- Reflection (critic rubric)

- Deterministic Validation

Here, Reflection acts as a structured reviewer: a critic scores outputs against a rubric over a bounded number of iterations, and its verdict is always paired with deterministic checks.
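A minimal sketch of a rubric-driven critic applied to the explanations shown to users. The rubric items (R1 to R3), the assumed amount format, and `stub_draft` (a placeholder for an LLM call) are all hypothetical. The design choice worth noting is that rubric item R2 is checked by code, not by another model: any amount in the explanation that does not appear in the source rows fails the review.

```python
import re
from decimal import Decimal
from typing import Callable

# Amounts written with two decimals, e.g. "1,234.50" or "-200.00" (assumed explanation style).
AMOUNT = re.compile(r"-?\d+(?:,\d{3})*\.\d{2}")


def amounts_in(text: str) -> set:
    return {Decimal(m.replace(",", "")) for m in AMOUNT.findall(text)}


def review(text: str, source_amounts: set, signals: list) -> list:
    """Rubric critic: returns the failed rubric items (empty list = pass)."""
    failures = []
    if not signals:
        failures.append("R1: no matching signal cited")
    unsupported = amounts_in(text) - source_amounts  # deterministic check: no invented numbers
    if unsupported:
        failures.append(f"R2: amounts not in source rows: {sorted(unsupported)}")
    if len(text) > 280:
        failures.append("R3: too long for the review panel")
    return failures


def reflect(draft: Callable[[list], str], source_amounts: set, signals: list, max_rounds: int = 2):
    """Bounded loop: redraft with the critic's feedback at most `max_rounds` extra times."""
    feedback: list = []
    for _ in range(max_rounds + 1):
        text = draft(feedback)
        feedback = review(text, source_amounts, signals)
        if not feedback:
            break
    return text, feedback  # non-empty feedback means: route to human review


# Stub drafter standing in for an LLM: the first draft invents an amount.
def stub_draft(feedback: list) -> str:
    if not feedback:
        return "Matched: amounts 1,234.50 and 1,243.50 agree."
    return "Matched: amount 1,234.50 on both sides; same reference."


text, issues = reflect(stub_draft, {Decimal("1234.50")}, ["same reference"])
print(text, issues)
```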

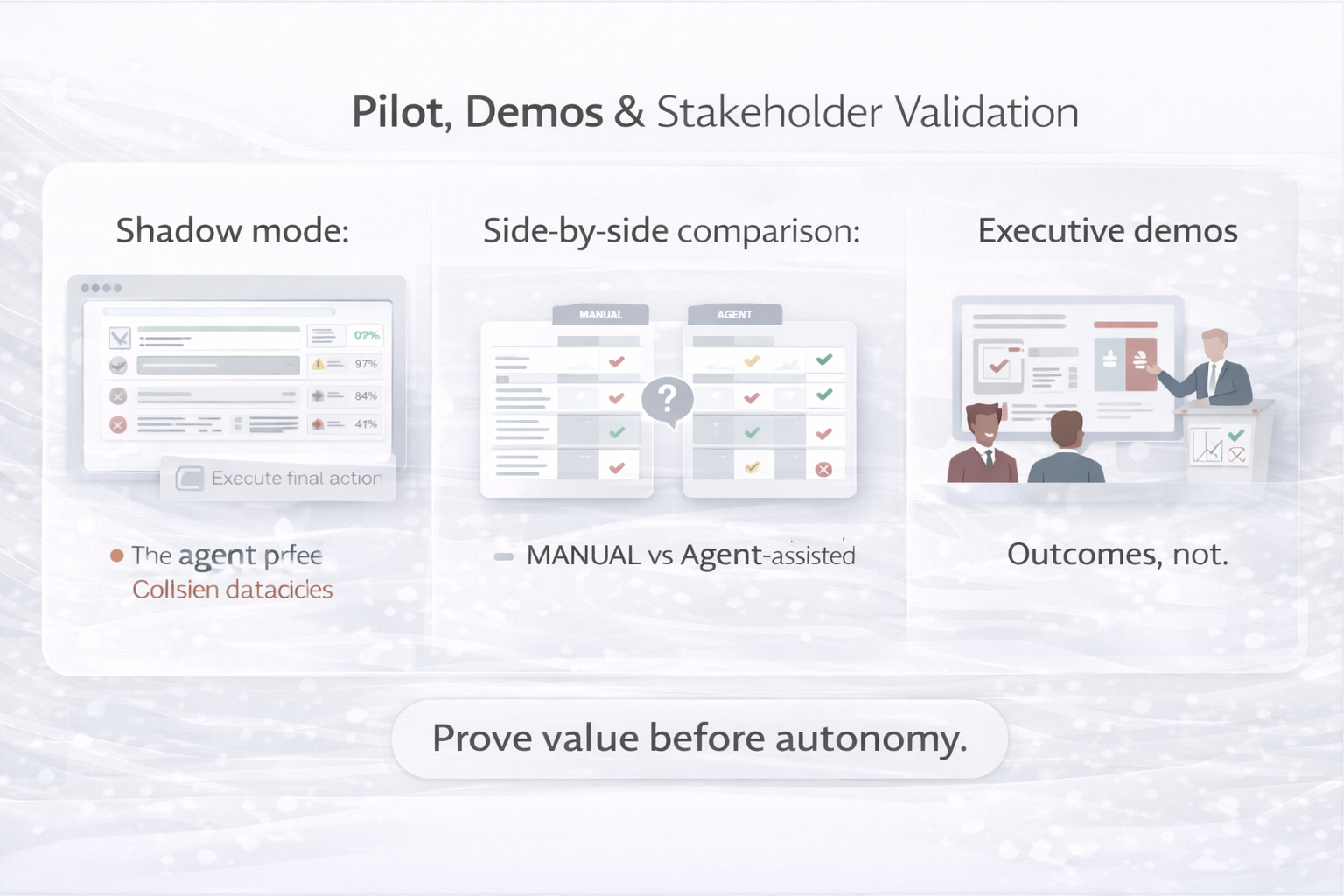

6) Pilot, Demos & Stakeholder Validation

Pilots should be run with real users and real data, but under controlled conditions. Strong pilots focus on evidence: accuracy, time saved, trust, and operational readiness.

- Shadow mode: agent produces results without executing final actions

- Side-by-side: manual vs agent-assisted reconciliation (time + error metrics)

- Executive demos: outcome-first storytelling with traceability (what, why, evidence)
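Shadow mode and side-by-side comparison are both easy to support if every side-effecting action goes through one executor. The sketch below uses hypothetical names (`ShadowExecutor`, `side_by_side`) and treats matches as (left id, right id) pairs. Disagreements are not automatically agent errors: the manual result can be wrong too, which is exactly what the pilot review should establish.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ShadowExecutor:
    """Records intended actions instead of performing them while `shadow` is True."""
    shadow: bool = True
    proposed: list = field(default_factory=list)

    def run(self, action: str, payload: dict, perform: Callable[[dict], None]) -> None:
        if self.shadow:
            self.proposed.append((action, payload))  # logged for review, nothing is written
        else:
            perform(payload)


def side_by_side(agent: set, manual: set, agent_minutes: float, manual_minutes: float) -> dict:
    """Compare agent-assisted and manual reconciliation of the same files."""
    union = agent | manual
    return {
        "agreement": len(agent & manual) / len(union) if union else 1.0,
        "agent_only": sorted(agent - manual),   # candidates for false positives
        "manual_only": sorted(manual - agent),  # candidates for misses (or manual errors)
        "minutes_saved": manual_minutes - agent_minutes,
    }


executor = ShadowExecutor()
posted: list = []
executor.run("post_adjustment", {"ref": "INV-7", "delta": "-12.00"}, posted.append)
print(posted, executor.proposed)
print(side_by_side({("A", "1"), ("B", "2")}, {("A", "1"), ("C", "3")}, agent_minutes=12.0, manual_minutes=45.0))
```

Switching from shadow to live is then a single flag, so the code exercised during the pilot is the same code that runs in production.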

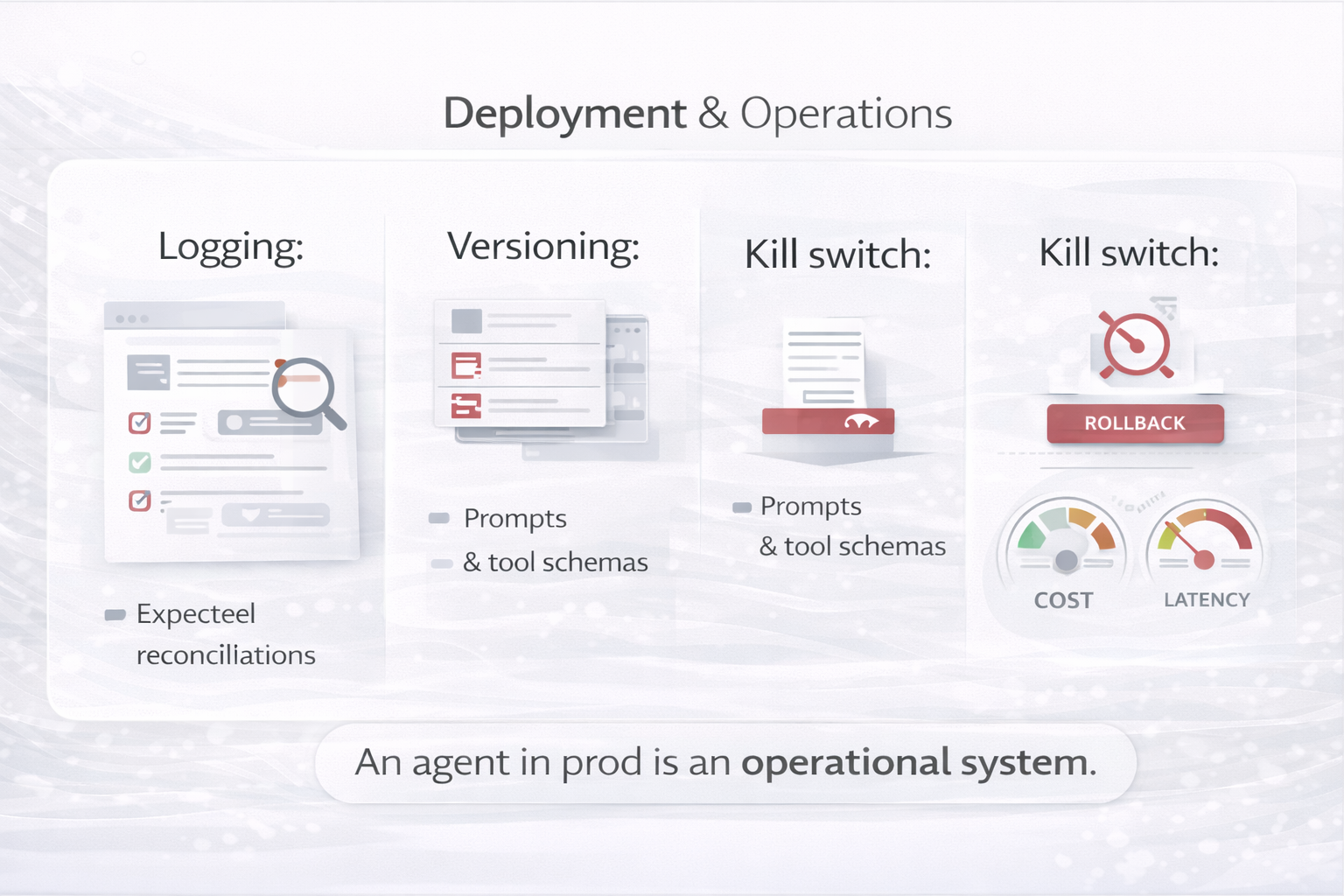

7) Deployment & Operations

Production deployment requires operational discipline. Agents must be observable and governable.

Treat prompts, tool schemas, and workflows as versioned artifacts.

Operational controls

- Versioned prompts and tool schemas

- Logging of decisions, tool calls, and confidence levels

- Rollback and kill-switch mechanisms

- Cost and latency monitoring
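Most of these controls can share one code path: every tool call checks a kill switch, is timed, and emits a structured log line stamped with the prompt and tool-schema versions. This is a sketch with hypothetical names and version strings; the cost figure is assumed to be supplied by the caller (for example, computed from the model provider's reported token usage), and `sink` would be a real log pipeline rather than `print`.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable

# Hypothetical version identifiers, bumped whenever a prompt or tool schema changes.
PROMPT_VERSION = "matcher-prompt@1.4.0"
TOOL_SCHEMA_VERSION = "tools@2.1.0"


@dataclass
class DecisionRecord:
    run_id: str
    prompt_version: str
    tool_schema_version: str
    tool: str
    decision: str
    confidence: float
    latency_ms: float
    cost_usd: float


class KillSwitch:
    """Operator-controlled flag checked before every tool call."""

    def __init__(self) -> None:
        self.enabled = True

    def check(self) -> None:
        if not self.enabled:
            raise RuntimeError("agent disabled by kill switch")


def logged_call(switch: KillSwitch, run_id: str, tool: str,
                fn: Callable[[], tuple], cost_usd: float = 0.0,
                sink: Callable[[str], None] = print) -> str:
    """Run one tool call, then emit a structured, versioned decision log line."""
    switch.check()
    start = time.perf_counter()
    decision, confidence = fn()
    latency_ms = (time.perf_counter() - start) * 1000
    record = DecisionRecord(run_id, PROMPT_VERSION, TOOL_SCHEMA_VERSION, tool,
                            decision, confidence, latency_ms, cost_usd)
    sink(json.dumps(asdict(record)))
    return decision


logs: list = []
switch = KillSwitch()
logged_call(switch, "run-001", "match", lambda: ("auto_accept", 0.95), cost_usd=0.002, sink=logs.append)
```

Because each log line carries the prompt and schema versions, a regression spotted in production can be traced to the exact release that introduced it, and rolled back.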

Patterns applied

- Safety Gate

- Tool Use (least privilege)

- Multi-agent (ops roles)

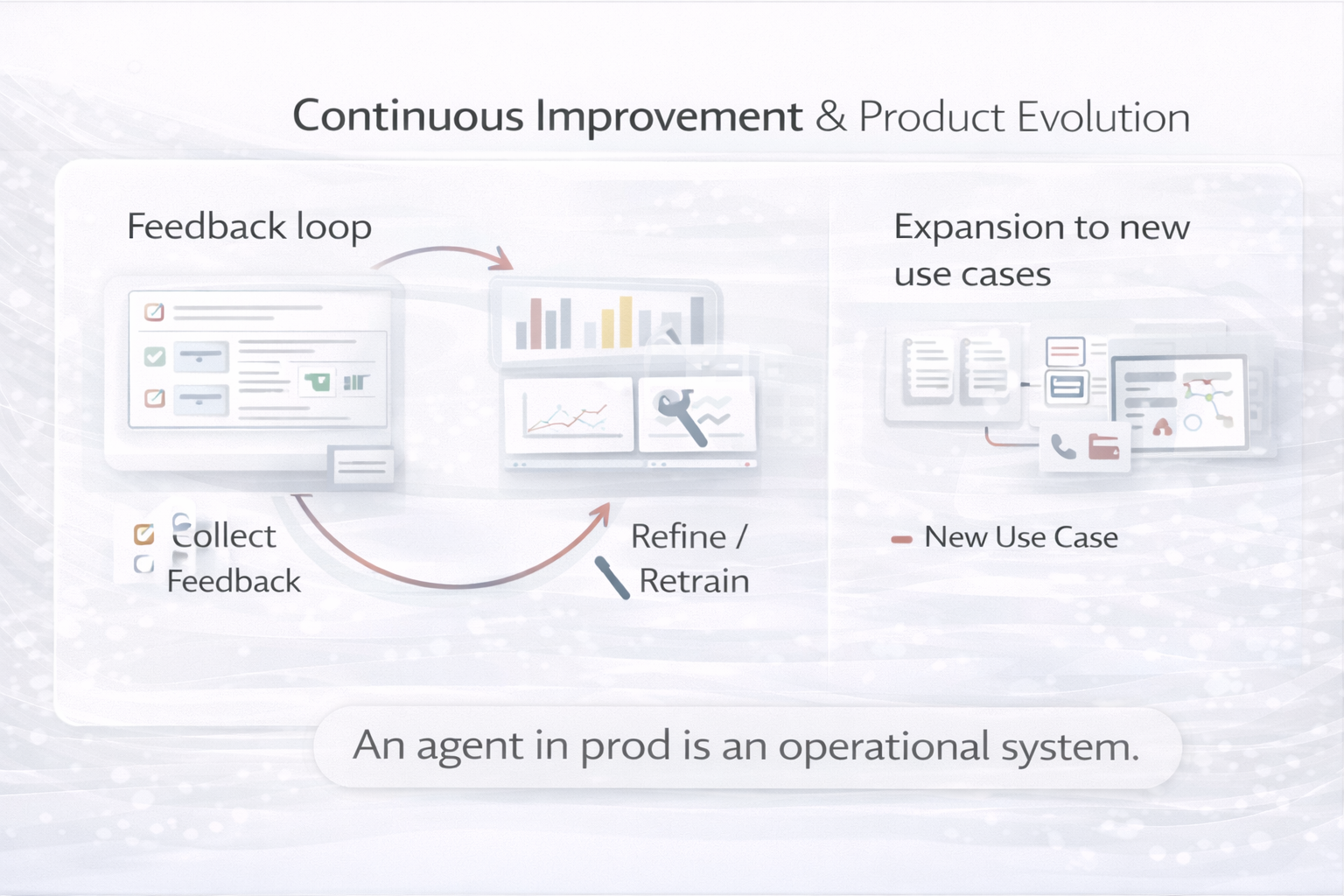

8) Continuous Improvement & Product Evolution

Successful agents are never “done.” They evolve based on user feedback, new data patterns, and changing business rules.

Build feedback and learning loops into the product from day one.

Continuous improvement mechanisms

- User feedback loops embedded in the UI (“Was this match correct?”)

- Periodic prompt refinement and eval set expansion

- Expansion to adjacent use cases (multi-file, multi-system reconciliation)
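A sketch of closing the loop from the in-UI question to the evaluation set. `Feedback`, `fold_feedback`, and the `CONFIDENT` threshold are hypothetical. Two things happen: every newly labeled pair becomes a golden-set case, and wrong matches the agent was highly confident about are surfaced first, since those are the ones that erode user trust.

```python
from dataclasses import dataclass

CONFIDENT = 0.90  # hypothetical: above this, a wrong match counts as a "confident error"


@dataclass(frozen=True)
class Feedback:
    pair: tuple        # (left id, right id) the agent matched
    confidence: float  # agent confidence when it proposed the match
    correct: bool      # the user's answer to "Was this match correct?"


def fold_feedback(feedback: list, golden: dict) -> dict:
    """Add newly labeled pairs to the golden set and surface confident errors."""
    added, confident_errors = 0, []
    for fb in feedback:
        if fb.pair not in golden:  # existing labels are kept; conflicts need human adjudication
            golden[fb.pair] = fb.correct
            added += 1
        if not fb.correct and fb.confidence >= CONFIDENT:
            confident_errors.append(fb.pair)  # priority input for prompt refinement
    return {"added": added, "confident_errors": confident_errors}


golden = {("A", "1"): True}
summary = fold_feedback(
    [Feedback(("A", "1"), 0.95, True), Feedback(("B", "2"), 0.97, False), Feedback(("C", "3"), 0.70, True)],
    golden,
)
print(summary)
```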

Patterns applied

- Reflection (post-run review)

- Planning (roadmap iterations)

- RAG (policy updates)

Key takeaway

Building an AI agent is a product journey. The strongest teams treat agents as long-lived digital products, combining UX design, engineering rigor, governance, and continuous evaluation. The result is an agent people trust, not just a demo.